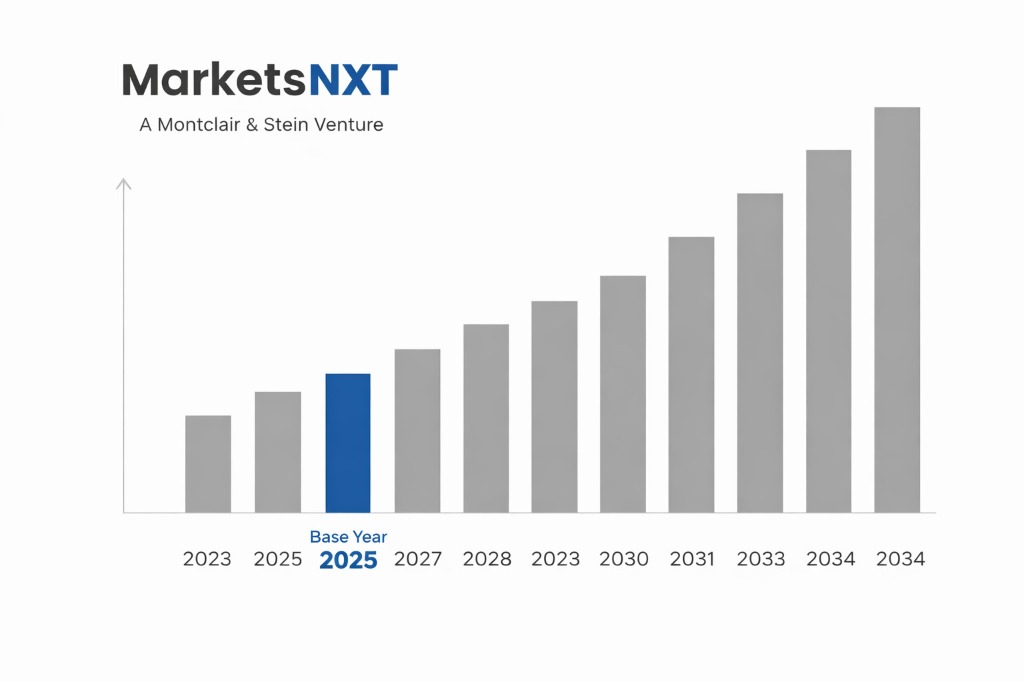

U.S. AI Chip Market Size, Share & Forecast 2026–2034

Report Highlights

- ✓ Market Size 2024: Approximately USD 78.6 billion

- ✓ Market Size 2034: Approximately USD 312.4 billion

- ✓ CAGR Range: 14.8%–16.5%

- ✓ Market Definition: AI accelerator chips and supporting semiconductor infrastructure in the US (GPUs, NPUs, and custom ASICs) for data centre and edge AI inference.

- ✓ Key Market Highlight: NVIDIA's H100/H200 GPUs command 90%+ of the AI training accelerator market, while US export controls on advanced AI chips to China under BIS rules are simultaneously protecting US AI chip market dominance and restructuring global AI compute geography.

- ✓ Top 5 Companies: NVIDIA Corporation, Advanced Micro Devices (AMD), Intel, Google (TPU), Qualcomm

- ✓ Base Year: 2025

- ✓ Forecast Period: 2026–2034

Industry Snapshot

The United States AI chip market was valued at approximately USD 78.6 billion in 2024 and is projected to reach approximately USD 312.4 billion by 2034, growing at a CAGR of 14.8%–16.5% over the forecast period. The US is the world's largest AI chip market by revenue and the dominant market by design innovation: essentially every leading AI chip architecture (NVIDIA H100/H200/B200, AMD MI300, Google TPU v4/v5, Amazon Trainium/Inferentia, Apple Neural Engine) is designed by a US-headquartered company. Fabrication is geographically distinct from design: more than 90% of advanced AI chips are fabricated by TSMC in Taiwan, with Intel's Ohio and Arizona fabs (Intel Foundry Services, IFS) and planned TSMC Arizona capacity representing the only US-territory fabrication for leading-edge nodes.
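As a rough arithmetic check on the headline figures, the growth rate implied by the two endpoint values can be computed directly. The sketch below assumes a ten-year compounding window from 2024 to 2034; the report does not state which window its 14.8%–16.5% range is based on, so the function name and the window are illustrative assumptions rather than the report's own method.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.10 means 10%)."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoint values quoted in the text (USD billion); the 10-year window is an assumption.
cagr_2024_2034 = implied_cagr(78.6, 312.4, 10)
print(f"Implied CAGR 2024-2034: {cagr_2024_2034:.1%}")  # about 14.8%
```

Under this assumption the endpoints imply a rate at the lower end of the quoted range; a shorter compounding window would imply a higher rate.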

The competitive landscape is structurally dominated by NVIDIA, whose CUDA software ecosystem and H100/H200 GPU architecture gave it an approximately 70%–80% share of the AI training accelerator market by revenue through 2024. NVIDIA's competitive moat is not primarily hardware (AMD's MI300X delivers comparable raw compute performance) but software: the CUDA ecosystem, with 3 million+ developers and integration with every major deep learning framework, creates switching costs that a hyperscaler would need 2–3 years of engineering investment to reduce, not eliminate. The competitive dynamics are being reshaped by three simultaneous forces: hyperscaler custom silicon development (Google TPU, Amazon Trainium, Microsoft Maia, Meta MTIA), AMD's investment in the ROCm software ecosystem, and the emergence of AI-inference-optimised architectures from Cerebras, Groq, and SambaNova that challenge the GPU paradigm for specific workloads.

Competitive Intensity Assessment

The US AI chip market competes across five dimensions with distinct intensity levels.

- Active competitors: approximately 25–35 companies designing AI accelerators for data centre applications are at various commercialisation stages, but effective competition at hyperscaler scale is limited to NVIDIA, AMD, and hyperscaler internal silicon teams. Below the design layer, contract manufacturers TSMC and (increasingly) Intel Foundry Services face a different competitive dynamic, with 2–3 credible options at leading-edge nodes.

- Price competition: at the AI training GPU tier, NVIDIA holds sufficient pricing power to maintain H100 GPU lease rates of USD 2.50–3.50/hr at hyperscaler scale. AMD's MI300X alternative is priced 15%–20% below NVIDIA but has failed to move market share materially, indicating that software ecosystem switching costs dominate price signals.

- Product differentiation: NVIDIA differentiates primarily through the NVLink interconnect, NVSwitch fabric, and CUDA software stack; AMD differentiates through open ROCm compatibility with multiple frameworks; Intel differentiates through the on-chip memory bandwidth architecture of Gaudi 3.

- Switching costs: highest in the industry. Migrating AI training pipelines from CUDA to ROCm requires 12–24 months of engineering effort at major hyperscalers.

- Barriers to entry: extreme. A new entrant needs USD 500M–1B of chip design investment, a 3–4 year development cycle, and TSMC CoWoS packaging allocation that is currently constrained industry-wide.

The three companies whose competitive actions will most significantly reshape US AI chip market share through 2027 are: Google, whose TPU v5 deployment and growing external TPU-as-a-service offering through Google Cloud are creating an NVIDIA alternative for LLM training at hyperscaler scale without CUDA dependency; AMD, whose ROCm 6.0 framework improvements and MI300X allocation at Microsoft Azure represent a concrete market share capture narrative for the first time; and Broadcom, whose custom ASIC design services for Google, Meta, and Apple position it as the enabling infrastructure for the hyperscaler transition to custom silicon, capturing displaced GPU market share without competing directly with NVIDIA.

Market Growth Drivers

US hyperscaler capital expenditure on AI infrastructure is the primary demand driver, with Microsoft, Google, Amazon, and Meta committing a combined USD 200–250 billion in infrastructure capex for 2024 — a substantial portion directed at AI compute clusters. OpenAI's Stargate initiative (with Microsoft and SoftBank) represents a USD 500 billion planned investment in US AI infrastructure through 2030, anchoring NVIDIA GPU procurement at a scale that will consume the majority of advanced CoWoS-packaged GPU supply available from TSMC for multiple years. US Department of Defense AI computing investment — including the JEDI successor JWCC cloud contract and dedicated AI hardware procurement under the DoD AI Strategy — provides a distinct demand channel growing at approximately 25%–30% annually, insulated from commercial cycle risk.

US export control policy is simultaneously a constraint and a structural competitive advantage. The October 2022 and October 2023 Bureau of Industry and Security (BIS) advanced chip export control rules, which restrict sales of A100, H100, and equivalently capable chips to Chinese customers, reduced NVIDIA's immediate China revenue (approximately 20%–25% of 2022 revenue) but created a structural bifurcation that concentrates premium AI chip demand in US, European, and allied markets where NVIDIA faces no restriction. The CHIPS and Science Act provides approximately USD 52.7 billion in semiconductor manufacturing and R&D investment, anchoring advanced fab development at Intel (Arizona, Ohio), TSMC Arizona, and Samsung Austin, and reducing the US AI chip supply chain's geopolitical exposure over the 2025–2030 timeframe.

Market Restraints and Challenges

TSMC CoWoS packaging capacity is the most immediate supply-side barrier to US AI chip market growth. NVIDIA's H100 and H200 GPUs require TSMC's CoWoS (chip-on-wafer-on-substrate) advanced packaging, for which TSMC had capacity of approximately 15,000 wafers per month (wpm) in 2023, constraining GPU unit production well below the demand that NVIDIA's order book implied. TSMC is investing in CoWoS expansion (targeting 40,000–50,000 wpm by 2025–2026), but advanced packaging bottlenecks have historically constrained AI chip supply cycles for 12–24 months after demand surges. HBM (high-bandwidth memory) supply concentration at Samsung and SK Hynix adds a parallel supply constraint: the HBM3E memory required for H200 and MI300X was supply-constrained through most of 2024.

Regulatory risk from antitrust scrutiny of NVIDIA's market position is an emerging governance constraint. The US Department of Justice opened an antitrust investigation into NVIDIA in 2024 focused on its CUDA ecosystem licensing practices and exclusive partnerships with cloud providers. While outcomes are uncertain, the investigation introduces legal cost, management distraction, and potential remedies that could require CUDA licensing changes — reducing NVIDIA's software moat and potentially accelerating AMD ROCm and other alternative ecosystem adoption. Energy consumption constraints from AI data centre expansion are increasingly material — AI data centres consume 5–10x more power per square foot than conventional data centres, and US grid capacity constraints in primary data centre markets (Northern Virginia, Arizona, Texas) are introducing 18–36 month interconnection queue delays for new large-scale AI data centre development.

Emerging Opportunities

Edge AI inference accelerator demand — in automotive AI (NVIDIA DRIVE, Qualcomm Snapdragon Ride, Mobileye EyeQ), industrial robotics, and consumer devices — represents a USD 15–25 billion incremental market segment by 2030 largely underpenetrated relative to data centre AI. Qualcomm's push into on-device AI with Snapdragon X Elite NPU architecture and Apple's Neural Engine integration in M-series silicon define the consumer edge; automotive AI for L2+ to L4 vehicles represents a structured procurement market growing at 30%+ annually. US government and defence AI chip demand is an explicitly protected domestic market — DoD procurement preferences, security clearance requirements, and classified programme requirements effectively exclude foreign suppliers from the defence AI compute sector, creating a structurally protected market for US-designed and US-assembled AI chips.

Regulatory and Policy Landscape

The CHIPS and Science Act (2022) provides USD 52.7 billion for semiconductor manufacturing incentives and R&D, administered by NIST and the Department of Commerce. CHIPS Act manufacturing grants require recipients to refrain from expanding advanced manufacturing capacity in countries of concern for 10 years, directly constraining TSMC, Samsung, and Intel from expanding Chinese AI chip capacity in exchange for US subsidy. BIS export controls on AI chips, which apply a total processing performance (TPP) threshold of 4,800 (TOPS multiplied by operation bit length, equivalent to 600 TOPS at FP8) alongside the performance density thresholds introduced under the 2023 rules, will continue tightening as AI chip performance improves. The National AI Initiative Act and Executive Order 14110 (Safe, Secure, and Trustworthy AI) establish federal AI governance frameworks that indirectly drive government procurement of US-designed AI computing infrastructure.
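The 4,800 figure in the BIS rules is usually expressed as total processing performance (TPP), which scales a chip's operations per second by the bit length of the operation. The sketch below is a simplified illustration, assuming TPP is dense TOPS multiplied by bit length and ignoring the rule's other criteria such as performance density; the function names and both example chips are hypothetical.

```python
BIS_TPP_THRESHOLD = 4800  # threshold figure quoted in the text


def total_processing_performance(dense_tops: float, bit_length: int) -> float:
    """Simplified TPP: dense tera-operations per second times operand bit length."""
    return dense_tops * bit_length


def meets_tpp_threshold(dense_tops: float, bit_length: int,
                        threshold: float = BIS_TPP_THRESHOLD) -> bool:
    """True if the simplified TPP reaches the threshold (other rule criteria ignored)."""
    return total_processing_performance(dense_tops, bit_length) >= threshold


# Hypothetical chips, not real product specifications.
chip_a_controlled = meets_tpp_threshold(dense_tops=1000.0, bit_length=8)  # TPP 8000
chip_b_controlled = meets_tpp_threshold(dense_tops=500.0, bit_length=8)   # TPP 4000
print(chip_a_controlled, chip_b_controlled)
```

Because bit length enters the product, the same threshold corresponds to a lower TOPS figure at wider data types, which is why a single TOPS number is not enough to say whether a chip is covered.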

Leading Market Participants

- NVIDIA Corporation

- Advanced Micro Devices (AMD)

- Intel Corporation

- Google (Tensor Processing Unit Division)

- Qualcomm

- Amazon Web Services (Trainium/Inferentia)

- Microsoft (Maia ASIC)

- Broadcom

- Cerebras Systems

- Marvell Technology

Domestic vs. International Dynamics

US-headquartered companies design approximately 85%–90% of the global AI chip market by revenue — a design dominance that is structurally durable given the CUDA ecosystem's developer network effects, the concentration of AI research talent at US universities and hyperscalers, and the IP protection regime supporting chip architecture development. Domestically, US-designed chips face no meaningful import competition — Chinese AI chips (Huawei Ascend 910B, Cambricon) have no commercial penetration in US data centres, and domestic US chip design leaves no market gap for foreign suppliers. International revenue — primarily from Asian hyperscalers, European cloud operators, and GCC data centres — represents approximately 45%–55% of NVIDIA and AMD revenue, making export control policy the most significant variable in total market revenue realisation.

The balance is shifting in favour of US domestic demand as China market access contracts under BIS restrictions and as US hyperscaler capex expands. The Stargate initiative, Microsoft's domestic AI infrastructure investment, and the bifurcation of global AI infrastructure into US-allied and Chinese-domestic ecosystems are concentrating premium AI chip demand in the US-accessible market. International players, primarily Arm (UK, SoftBank-owned) providing instruction set architecture and Samsung Foundry (Korea) providing alternative advanced fabrication, participate in the US market as enabling infrastructure rather than as competing suppliers. This maintains structural US chip design leadership through 2034, barring a transformation in the Chinese AI chip ecosystem that current BIS export controls are specifically designed to prevent.

Long-Term Market Perspective

Through 2034, the US AI chip market will maintain design leadership as the structural foundation of a market growing from USD 78.6 billion to USD 312.4 billion. The architecture trajectory — from GPU parallelism toward application-specific accelerators optimised for inference workloads — will create market openings for specialised AI chip designers beyond NVIDIA and AMD, benefitting the broader US fabless ecosystem. TSMC Arizona reaching 2nm production by 2026–2028 and Intel Foundry Services' 18A process maturation reduce US AI chip supply chain geographic concentration risk — the most significant supply chain vulnerability in the current architecture.

The macro scenario that would most significantly alter the growth trajectory is a shift in hyperscaler capital allocation from proprietary custom silicon toward standardised NVIDIA GPU infrastructure, or vice versa. If Google, Amazon, and Meta all successfully deploy custom silicon for 70%+ of their AI inference workloads by 2028, NVIDIA's addressable market contracts sharply toward training only, compressing total market size and shifting margin toward cloud infrastructure design capabilities. Conversely, if AI model scale requirements continue increasing faster than custom silicon can cost-effectively address, NVIDIA's GPU architecture advantage compounds through 2034, concentrating an even larger share of market value in NVIDIA.

Market Segmentation

By Chip Type

- GPU AI Accelerators (Training and Inference)

- Custom ASICs and TPUs (Hyperscaler-Designed Silicon)

- Edge AI NPUs and Inference Chips

- Others (FPGA-Based AI Accelerators, Neuromorphic Chips)

By Application

- Hyperscale Data Centre AI Training

- Cloud AI Inference Services

- Automotive and Autonomous Systems

- Government and Defence AI Computing

- Consumer Device and Edge AI Applications

By Procurement Channel

- Direct Hyperscaler Procurement (OEM Contracts)

- Cloud Marketplace (GPU-as-a-Service)

- Enterprise and OEM Channel Partners

- Government and Defence Procurement (GSA Schedule)

Research Framework and Methodological Approach

Information Procurement → Information Analysis → Market Formulation & Validation

Overview of Our Research Process

MarketsNXT follows a structured, multi-stage research framework designed to ensure accuracy, reliability, and strategic relevance of every published study. Our methodology integrates globally accepted research standards with industry best practices in data collection, modeling, verification, and insight generation.

1. Data Acquisition Strategy

Robust data collection is the foundation of our analytical process. MarketsNXT employs a layered sourcing model.

Secondary research:

- Company annual reports & SEC filings

- Industry association publications

- Technical journals & white papers

- Government databases (World Bank, OECD)

- Paid commercial databases

Primary research:

- KOL Interviews (CEOs, Marketing Heads)

- Surveys with industry participants

- Distributor & supplier discussions

- End-user feedback loops

- Questionnaires for gap analysis

Analytical Modeling and Insight Development

After collection, datasets are processed and interpreted using multiple analytical techniques to identify baseline market values, demand patterns, growth drivers, constraints, and opportunity clusters.

2. Market Estimation Techniques

MarketsNXT applies multiple estimation pathways to strengthen forecast accuracy.

Bottom-up Approach

Aggregating granular demand data from country level to derive global figures.

Top-down Approach

Breaking down the parent industry market to identify the target serviceable market.
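The two estimation pathways can be illustrated side by side. The sketch below is a minimal example under stated assumptions: every figure, segment name, and helper function is hypothetical and chosen only to show the arithmetic, not taken from the report's model.

```python
from dataclasses import dataclass


@dataclass
class SegmentEstimate:
    name: str
    value_usd_bn: float


def bottom_up_total(segments: list[SegmentEstimate]) -> float:
    """Bottom-up: add up granular segment-level estimates."""
    return sum(s.value_usd_bn for s in segments)


def top_down_total(parent_market_usd_bn: float, target_share: float) -> float:
    """Top-down: apply the target market's share to the parent market size."""
    if not 0.0 <= target_share <= 1.0:
        raise ValueError("target_share must be between 0 and 1")
    return parent_market_usd_bn * target_share


# Hypothetical inputs for illustration only.
example_segments = [
    SegmentEstimate("Segment A", 40.0),
    SegmentEstimate("Segment B", 25.0),
    SegmentEstimate("Segment C", 10.0),
]
bottom_up = bottom_up_total(example_segments)
top_down = top_down_total(parent_market_usd_bn=500.0, target_share=0.16)

# Relative gap between the two pathways, measured against their midpoint.
relative_gap = abs(bottom_up - top_down) / ((bottom_up + top_down) / 2)
print(f"bottom-up {bottom_up:.1f}, top-down {top_down:.1f}, gap {relative_gap:.1%}")
```

A small gap between the two totals suggests the pathways corroborate each other; a large gap points to an input that needs revisiting before a figure is published.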

Supply Chain Anchored Forecasting

MarketsNXT integrates value chain intelligence into its forecasting structure to ensure commercial realism and operational alignment.

Supply-Side Evaluation

Revenue and capacity estimates are developed through company financial reviews, product portfolio mapping, benchmarking of competitive positioning, and commercialization tracking.

3. Market Engineering & Validation

Market engineering involves the triangulation of data from multiple sources to minimize errors.

- Extensive gathering of raw data.

- Statistical regression & trend analysis.

- Cross-verification with experts.

- Publication of market study.
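Triangulation can be pictured as reconciling several independent estimates of the same quantity and flagging any that sit far from the consensus. The sketch below is one possible approach, assuming the median as the central value and a 10% deviation tolerance; the source names, figures, and tolerance are hypothetical and not drawn from the report's methodology.

```python
from statistics import median


def triangulate(estimates: dict[str, float],
                tolerance: float = 0.10) -> tuple[float, list[str]]:
    """Return the median estimate and the sources deviating from it by more than tolerance."""
    if not estimates:
        raise ValueError("at least one estimate is required")
    central = median(estimates.values())
    outliers = sorted(
        name for name, value in estimates.items()
        if abs(value - central) / central > tolerance
    )
    return central, outliers


# Hypothetical estimates of one market value (USD billion) from different sources.
hypothetical_estimates = {
    "bottom_up": 75.0,
    "top_down": 80.0,
    "supply_side": 78.0,
    "expert_panel": 95.0,
}
central_value, flagged_sources = triangulate(hypothetical_estimates)
print(central_value, flagged_sources)
```

Flagged sources would go back for cross-verification with experts rather than being dropped, since an outlier can also indicate that the other inputs share a common bias.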

Client-Centric Research Delivery

MarketsNXT positions research delivery as a collaborative engagement rather than a static information transfer. Analysts work with clients to clarify objectives, interpret findings, and connect insights to strategic decisions.