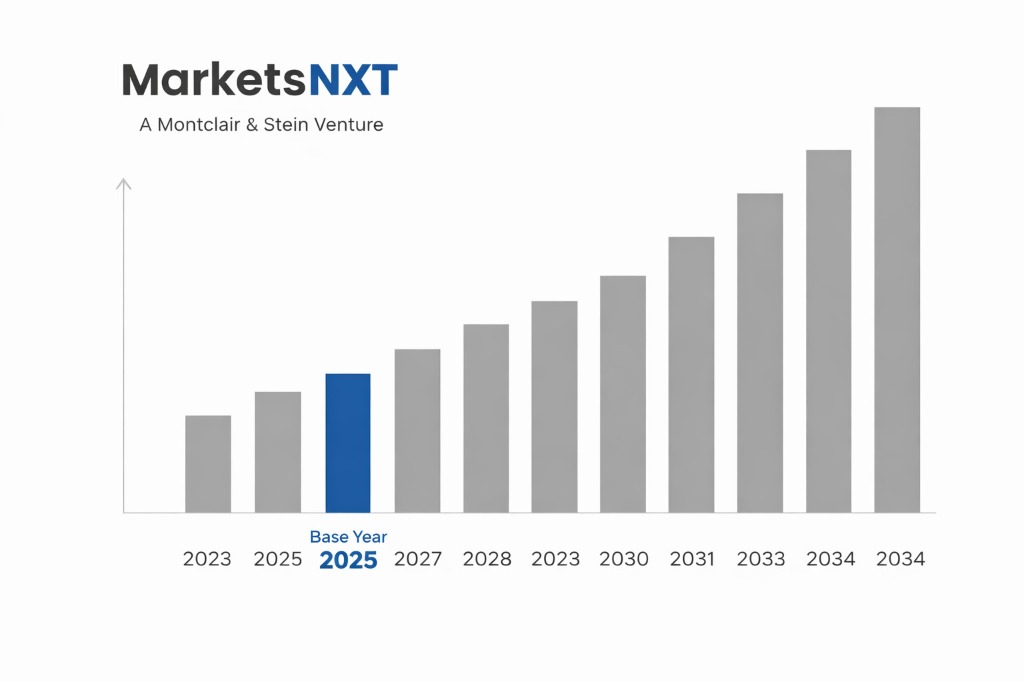

Autonomous Vehicle Sensor and Perception System Market Size, Share & Forecast 2026–2034

Report Highlights

- ✓Market Size 2024: USD 2.8 billion

- ✓Market Size 2034: USD 26.7 billion

- ✓CAGR: 27.6%

- ✓Market Definition: Sensors, perception systems, and sensor fusion platforms for autonomous and advanced driver-assistance vehicles, including LiDAR, radar, cameras, ultrasonic sensors, and integrated perception software for passenger vehicles, commercial trucks, robotaxis, and off-road autonomous equipment.

- ✓Leading Companies: Mobileye, Waymo, Hesai Technology, Luminar Technologies, Valeo

- ✓Base Year: 2025

- ✓Forecast Period: 2026–2034
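The headline figures can be cross-checked with the standard compound annual growth rate formula, CAGR = (end value / start value)^(1 / years) - 1. This is a minimal sketch that treats the 2024 and 2034 market sizes as endpoints ten years apart. The report states its 27.6% rate for the 2026–2034 forecast window, with 2025 as the base year, so the endpoint-implied rate is not expected to match it exactly.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding `start_value` at `rate` for `years` periods."""
    return start_value * (1.0 + rate) ** years


# Headline figures from the report (USD billion).
size_2024 = 2.8
size_2034 = 26.7

implied = cagr(size_2024, size_2034, years=10)
print(f"Endpoint-implied CAGR 2024-2034: {implied:.1%}")

# For comparison: the 2024 figure compounded at the stated 27.6% for 10 years.
print(f"2.8 compounded at 27.6% for 10 years: {project(size_2024, 0.276, 10):.1f}")
```

Comparing the two results shows how sensitive a headline CAGR is to the choice of base year and forecast window.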

Who Controls This Market — And Who Is Threatening That Control

Mobileye, the Intel subsidiary (now separately listed) that pioneered computer-vision-based ADAS, dominates the production ADAS sensor market. Its EyeQ system-on-chip has been deployed in over 125 million vehicles across more than 50 OEM customers. Its SuperVision and Chauffeur full-stack systems, which use camera-centric perception supplemented by radar, are the most widely deployed semi-autonomous driving systems at consumer-vehicle production volumes. Waymo's sensor suite, including its custom Laser Bear Honeycomb LiDAR and fourth-generation custom radar, defines the state of the art in Level 4 perception. Waymo's closed-system approach (it does not sell sensor components) limits its direct participation in the component supply market, even as it shapes performance benchmarks.

Chinese LiDAR manufacturers, led by Hesai Technology and Innovusion, have dramatically disrupted the LiDAR component market. Hesai's AT128 and QT128 units are priced at USD 200–500 each, 90% or more below the USD 5,000–75,000 pricing of first-generation LiDAR systems. They are deployed at volume in Chinese electric vehicles and robotaxis. Luminar Technologies is the US LiDAR developer with design-win commitments from Volvo, Mercedes-Benz, and Toyota, and it competes on range (250 m versus Hesai's 150 m) and software integration rather than on price. The threat to LiDAR and radar vendors alike is the camera-only approach advocated by Tesla, whose Full Self-Driving system uses eight cameras and no LiDAR. Tesla argues that human-level driving can be achieved with human-equivalent vision hardware if the AI software is sufficiently capable.
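The size of the price gap depends on which ends of the two quoted ranges are compared. This is a minimal sketch of that arithmetic. It uses only the per-unit prices stated above: USD 200–500 for the Hesai units versus USD 5,000–75,000 for first-generation systems.

```python
def percent_below(new_price: float, old_price: float) -> float:
    """How far `new_price` sits below `old_price`, as a fraction of `old_price`."""
    return 1.0 - new_price / old_price


new_low, new_high = 200.0, 500.0        # quoted Hesai AT128/QT128 range, USD
old_low, old_high = 5_000.0, 75_000.0   # quoted first-generation LiDAR range, USD

# Smallest discount: most expensive new unit vs cheapest legacy unit.
min_discount = percent_below(new_high, old_low)
# Largest discount: cheapest new unit vs most expensive legacy unit.
max_discount = percent_below(new_low, old_high)

print(f"Discount range: {min_discount:.1%} to {max_discount:.1%}")
```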

Industry Snapshot

Autonomous vehicle perception systems span three principal sensor modalities, each providing different information about the driving environment. LiDAR (Light Detection and Ranging) generates 3D point clouds with centimetre-level accuracy and performs reliably in low light. However, it is sensitive to rain, fog, and snow, and it remains the most expensive sensor modality. Radar provides velocity and range measurements in adverse weather, including the precipitation that degrades LiDAR. Recent 4D imaging radar from companies including Arbe Robotics and Vayyar approaches the spatial resolution of lower-resolution LiDAR. Cameras provide the highest-resolution information about object appearance, such as traffic signs, lane markings, and pedestrian behaviour, at the lowest cost per sensor. They require significant AI processing to convert pixel data into 3D scene understanding. Production Level 2–4 systems typically fuse several of these modalities, and the sensor fusion software weights each modality's output according to conditions. That software layer is where long-term margin is increasingly concentrating.
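Condition-dependent weighting of this kind is often illustrated with inverse-variance fusion. Each sensor's estimate is weighted by 1/σ², so a modality whose noise grows in bad weather automatically counts for less. This is a minimal sketch that assumes each modality reports a range to the same object with a known standard deviation. The noise values and the rain multipliers below are hypothetical. They were chosen only to mirror the qualitative behaviour described above, with LiDAR degrading in precipitation and radar largely unaffected. Production fusion stacks typically use Kalman-style filters rather than this single-shot weighted average.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    modality: str
    range_m: float   # estimated distance to the object, metres
    sigma_m: float   # 1-sigma measurement noise, metres


# Hypothetical noise inflation factors applied in rain (illustrative only).
RAIN_SIGMA_MULTIPLIER = {"lidar": 4.0, "camera": 3.0, "radar": 1.1}


def fuse(measurements: list[Measurement], raining: bool = False) -> tuple[float, float]:
    """Inverse-variance weighted fusion; returns (fused range, fused sigma)."""
    weights = []
    for m in measurements:
        factor = RAIN_SIGMA_MULTIPLIER.get(m.modality, 1.0) if raining else 1.0
        sigma = m.sigma_m * factor
        weights.append(1.0 / sigma**2)
    total = sum(weights)
    fused = sum(w * m.range_m for w, m in zip(weights, measurements)) / total
    return fused, (1.0 / total) ** 0.5


readings = [
    Measurement("lidar", 42.0, 0.05),
    Measurement("radar", 42.6, 0.30),
    Measurement("camera", 40.5, 1.50),
]

for raining in (False, True):
    rng, sigma = fuse(readings, raining)
    print(f"raining={raining}: {rng:.2f} m +/- {sigma:.2f} m")
```

In dry conditions the fused range sits almost on the LiDAR reading. In rain it shifts toward the radar reading, and the fused uncertainty grows.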

The Forces Accelerating Demand Right Now

Regulatory mandates for ADAS safety features are driving sensor market growth independent of autonomous driving ambitions. The EU's General Safety Regulation (EU) 2019/2144 requires automatic emergency braking, emergency lane keeping, and driver drowsiness and attention warning on new vehicles sold in Europe. These requirements were phased in from July 2022 for new vehicle types and from July 2024 for all new registrations. The regulation has created mandatory demand for the camera and radar sensors that underpin these systems. This demand is driven by production volume and is decoupled from consumers' willingness to pay for autonomous features. China's GB/T standards for intelligent connected vehicles and the MIIT's ADAS mandate for new vehicle type approvals have similarly expanded mandatory sensor content per vehicle. Commercial truck automation is driving a separate high-growth sensor market. In this segment, the economic case for autonomous highway driving is clearer than for passenger vehicles, and the regulatory approval pathway is more straightforward. Aurora Innovation is deploying full sensor suites on Class 8 trucks, while earlier entrants Waymo Via and TuSimple have since scaled back their US trucking programmes.

What Is Holding This Market Back

Sensor cost remains a barrier to mass-market deployment of autonomous features. A full Level 3 sensor suite, including solid-state LiDAR, 4D radar, and a camera array, adds USD 1,500–3,000 to the vehicle bill of materials. Only luxury and premium-segment vehicles can absorb that premium without significant consumer price resistance. The regulatory approval pathway for Level 4 vehicles requires demonstrated safety equivalence to human driving across the full operational design domain, and it remains undefined in most jurisdictions. The resulting commercial uncertainty is delaying fleet deployment beyond the specific geofenced urban corridors where robotaxi services currently operate. The China-US geopolitical dimension also creates procurement restrictions. US OEMs face pressure to reduce or eliminate Hesai and other Chinese LiDAR suppliers from their ADAS supply chains under national security procurement guidance, which disrupts established, cost-competitive supply relationships.

The Investment Case: Bull, Bear, and What Decides It

The bull case projects the autonomous vehicle sensor market growing with overall ADAS content per vehicle. Regulatory mandates are expected to raise average sensor content from approximately USD 300 per vehicle today to USD 800–1,200 by 2030. Level 3–4 feature adoption in the premium and commercial vehicle segments adds further growth. At roughly 100 million new vehicles produced globally each year, an average sensor content of USD 1,000 implies a USD 100 billion addressable market by 2030. That figure excludes the aftermarket, robotaxi fleets, and commercial vehicles, which carry much higher per-vehicle sensor content.
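The addressable-market arithmetic in the bull case is annual vehicle production multiplied by average sensor content per vehicle. This is a minimal sketch that uses the report's round figure of 100 million vehicles and the per-vehicle content figures it cites. These inputs are the report's assumptions, not independent estimates.

```python
VEHICLES_PER_YEAR = 100_000_000  # report's round figure for global new-vehicle production


def addressable_market_usd_bn(content_per_vehicle_usd: float,
                              vehicles: int = VEHICLES_PER_YEAR) -> float:
    """Annual addressable market in USD billion = vehicles x sensor content per vehicle."""
    return vehicles * content_per_vehicle_usd / 1e9


for content in (300, 800, 1_000, 1_200):
    print(f"USD {content:>5,} per vehicle -> USD {addressable_market_usd_bn(content):.0f} billion")
```

The USD 300 row corresponds to the report's estimate of today's average content. Including it shows how much of the projected opportunity depends on content growth rather than vehicle volume.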

The bear case observes that sensor hardware is commoditising faster than the bull case assumes — Chinese LiDAR vendors are compressing pricing at rates that eliminate the margin advantage of Western sensor companies even as the market grows. If Tesla's camera-only FSD approach demonstrates Level 4 capability before LiDAR-equipped competitors, it will validate a sensor architecture that eliminates LiDAR as a mandatory component, removing the highest-value sensor category from the bill of materials. The decisive variable is the Level 4 regulatory approval timeline — each year of delay reduces the TAM for premium sensor suites and extends the period where commoditised ADAS sensors dominate market economics.

Where the Next USD Billion Is Being Built

4D imaging radar is the fastest-growing sensor subsegment. New MIMO imaging radars from Arbe Robotics, Vayyar, and Uhnder approach LiDAR-class spatial resolution while keeping radar's all-weather performance advantage, at a fraction of LiDAR's cost. That combination could make 4D radar the sensor that extends Level 3 ADAS to mid-market vehicles. Sensor fusion middleware is capturing a growing share of system value as hardware commoditises. These are the software platforms that integrate multi-modal sensor data into a coherent 3D scene representation for vehicle control. Apex.AI, the Autoware Foundation, and Mobileye's software stack are among those building the abstraction layer that lets OEMs swap sensor hardware without redesigning the perception pipeline. In-cabin sensing for driver monitoring and occupant safety uses near-infrared cameras and radar to monitor driver attention, detect drowsiness, and classify occupants. This market is driven by EU GSR2 mandates and Euro NCAP rating protocols and is projected to grow to USD 3–4 billion by 2028.
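The resolution claim for 4D imaging radar can be made concrete with two textbook approximations. In MIMO radar, the virtual array has N = N_tx × N_rx channels. With half-wavelength element spacing, a uniform linear array of N channels resolves angles of roughly 2/N radians at boresight. A waveform of bandwidth B resolves range to about c / (2B). This is a minimal sketch. It assumes a hypothetical 12-transmit by 16-receive array laid out along a single axis, and it uses the 4 GHz available in the 77–81 GHz automotive band. Real 4D radars split their aperture between azimuth and elevation, so per-axis figures are coarser than this idealised case.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def virtual_channels(n_tx: int, n_rx: int) -> int:
    """MIMO virtual array size: every transmit/receive pair acts as one channel."""
    return n_tx * n_rx


def angular_resolution_deg(n_virtual: int) -> float:
    """Approximate boresight angular resolution (2/N rad) for lambda/2 spacing."""
    return math.degrees(2.0 / n_virtual)


def range_resolution_m(bandwidth_hz: float) -> float:
    """Range resolution c / (2B) for a radar waveform of bandwidth B."""
    return SPEED_OF_LIGHT_M_S / (2.0 * bandwidth_hz)


# Hypothetical configurations for comparison (not specific products).
for name, n_tx, n_rx in [("conventional 3Tx x 4Rx", 3, 4), ("imaging 12Tx x 16Rx", 12, 16)]:
    n = virtual_channels(n_tx, n_rx)
    print(f"{name}: {n} channels, ~{angular_resolution_deg(n):.1f} deg")

print(f"Range resolution at 4 GHz bandwidth: {range_resolution_m(4e9) * 100:.1f} cm")
```

The jump from about 9.5° to about 0.6° between the two hypothetical arrays is the core of the "LiDAR-class resolution" argument. Real products also depend on antenna layout, signal processing, and elevation coverage.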

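The hardware-abstraction idea behind sensor fusion middleware can be sketched as a common interface that every sensor driver implements, so the perception pipeline depends only on that interface. This is a minimal sketch in plain Python. The `RangeSensor` protocol, the two vendor adapters, and the `nearest_obstacle` pipeline step are hypothetical names for illustration. They do not reflect the actual APIs of Apex.AI, Autoware, or Mobileye.

```python
import math
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Detection:
    x_m: float  # forward distance in the vehicle frame, metres
    y_m: float  # lateral offset in the vehicle frame, metres


class RangeSensor(Protocol):
    """Hypothetical interface every sensor adapter must satisfy."""

    def detections(self) -> list[Detection]: ...


class VendorALidar:
    """Hypothetical adapter: vendor A reports Cartesian points in centimetres."""

    def __init__(self, raw_points_cm: list[tuple[float, float]]):
        self._raw = raw_points_cm

    def detections(self) -> list[Detection]:
        return [Detection(x / 100.0, y / 100.0) for x, y in self._raw]


class VendorBRadar:
    """Hypothetical adapter: vendor B reports (range m, bearing deg) pairs."""

    def __init__(self, raw_polar: list[tuple[float, float]]):
        self._raw = raw_polar

    def detections(self) -> list[Detection]:
        out = []
        for rng, bearing_deg in self._raw:
            b = math.radians(bearing_deg)
            out.append(Detection(rng * math.cos(b), rng * math.sin(b)))
        return out


def nearest_obstacle(sensors: list[RangeSensor]) -> float:
    """Pipeline step that sees only the common interface, never vendor formats."""
    return min(math.hypot(d.x_m, d.y_m) for s in sensors for d in s.detections())


sensors = [VendorALidar([(2500.0, 100.0)]), VendorBRadar([(18.0, 5.0)])]
print(f"Nearest obstacle: {nearest_obstacle(sensors):.1f} m")
```

Under this design, swapping in a different LiDAR means writing one new adapter class. `nearest_obstacle` and everything downstream of it stay unchanged.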
Market at a Glance

| Parameter | Details |

|---|---|

| Market Size 2024 | USD 2.8 billion |

| Market Size 2034 | USD 26.7 billion |

| Growth Rate | 27.6% CAGR (2026–2034) |

| Most Critical Decision Factor | Technology maturity and regulatory readiness |

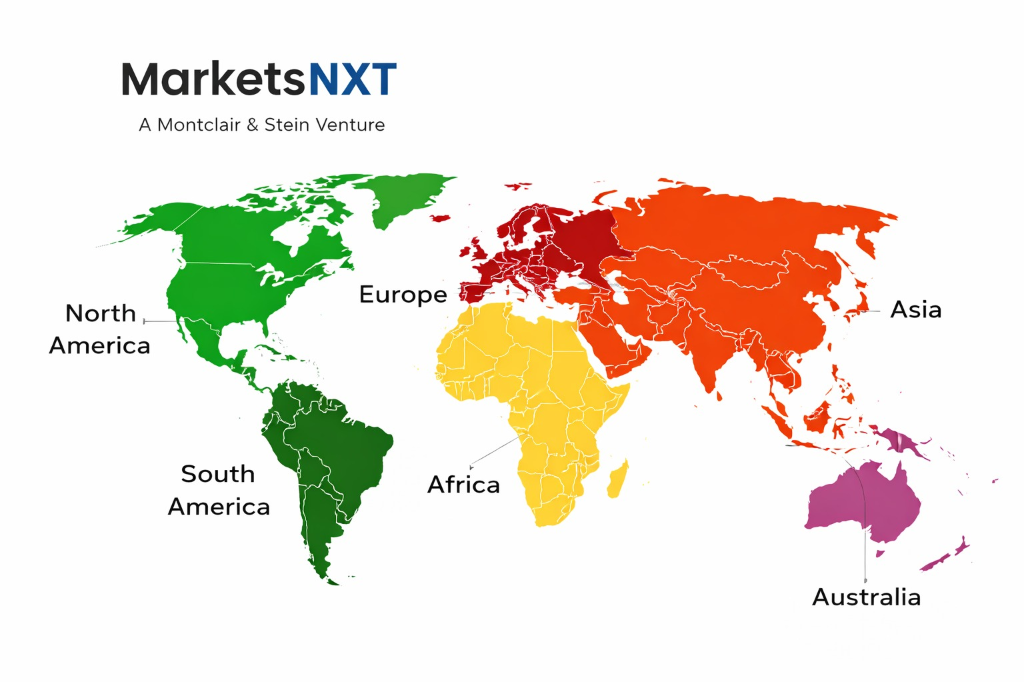

| Largest Region | Asia-Pacific |

| Competitive Structure | Fragmented — multiple platform and specialist players |

Regional Intelligence

China is the largest and fastest-growing autonomous vehicle sensor market. Three factors drive it: the world's highest EV adoption rate, with new energy vehicles (NEVs) accounting for more than 35% of new vehicle sales; mandatory ADAS content requirements for new vehicle type approvals; and the domestic robotaxi ecosystems of Baidu Apollo Go, Pony.ai, and WeRide, which are deploying at increasing scale in Tier 1 cities. Chinese LiDAR manufacturers (Hesai, Robosense, Innovusion, and Livox from DJI) are global cost leaders and supply both domestic OEMs and select international programmes. North America drives technology innovation, regulatory framework development, and premium-segment autonomous feature deployment. California, Texas, and Arizona serve as the primary geographies for commercial robotaxi and autonomous trucking operations. Europe's market is regulatory-driven. EU GSR2 ADAS mandates are creating predictable volume growth for camera and radar content across all vehicle segments, and premium OEMs (Mercedes-Benz, BMW, Audi) are deploying Level 3 highway automation with high-sensor-content suites.

Leading Market Participants

- Mobileye

- Hesai Technology

- Luminar Technologies

- Valeo

- Innoviz Technologies

Long-Term Market Perspective

By 2034, the autonomous vehicle sensor market will have bifurcated: a commoditised ADAS sensor segment serving regulatory-mandated features at USD 100–400 per vehicle in the mass-market segment, and a premium autonomous sensor suite market serving Level 3–4 vehicles in the USD 500–3,000 per vehicle range. The value concentration will have shifted from sensor hardware to sensor fusion AI software and training data — companies owning the perception AI stack and training datasets from 100 billion+ km of real-world driving will command software licensing revenue that exceeds the hardware margin in the long term. The regulatory approval of Level 4 autonomous commercial vehicles — trucks, robotaxis, delivery robots — will be the event that triggers the next major sensor market growth cycle, expected in the 2027–2030 timeframe for leading jurisdictions.

Research Framework and Methodological Approach

- Information Procurement

- Information Analysis

- Market Formulation & Validation

Overview of Our Research Process

MarketsNXT follows a structured, multi-stage research framework designed to ensure accuracy, reliability, and strategic relevance of every published study. Our methodology integrates globally accepted research standards with industry best practices in data collection, modeling, verification, and insight generation.

1. Data Acquisition Strategy

Robust data collection is the foundation of our analytical process. MarketsNXT employs a layered sourcing model that combines secondary and primary research.

Secondary sources:

- Company annual reports & SEC filings

- Industry association publications

- Technical journals & white papers

- Government databases (World Bank, OECD)

- Paid commercial databases

Primary sources:

- KOL interviews (CEOs, marketing heads)

- Surveys with industry participants

- Distributor & supplier discussions

- End-user feedback loops

- Questionnaires for gap analysis

Analytical Modeling and Insight Development

After collection, datasets are processed and interpreted using multiple analytical techniques to identify baseline market values, demand patterns, growth drivers, constraints, and opportunity clusters.

2. Market Estimation Techniques

MarketsNXT applies multiple estimation pathways to strengthen forecast accuracy.

Bottom-up Approach

Aggregating granular demand data from country level to derive global figures.

Top-down Approach

Breaking down the parent industry market to identify the target serviceable market.

Supply Chain Anchored Forecasting

MarketsNXT integrates value chain intelligence into its forecasting structure to ensure commercial realism and operational alignment.

Supply-Side Evaluation

Revenue and capacity estimates are developed through company financial reviews, product portfolio mapping, benchmarking of competitive positioning, and commercialization tracking.
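The two estimation pathways described above can be illustrated side by side. This is a minimal sketch with entirely hypothetical inputs: the country figures, parent-market size, and segment share are placeholders, not MarketsNXT data. The bottom-up estimate sums country-level demand, and the top-down estimate applies a share to a parent market. A large gap between the two flags assumptions that need revisiting.

```python
def bottom_up(country_demand_usd_bn: dict[str, float]) -> float:
    """Global estimate as the sum of granular country-level figures."""
    return sum(country_demand_usd_bn.values())


def top_down(parent_market_usd_bn: float, target_share: float) -> float:
    """Global estimate as a share of the parent industry market."""
    return parent_market_usd_bn * target_share


def relative_gap(a: float, b: float) -> float:
    """Absolute difference between two estimates, relative to their mean."""
    return abs(a - b) / ((a + b) / 2.0)


# Hypothetical placeholder inputs, for illustration only.
countries = {"Country A": 1.0, "Country B": 0.5, "Country C": 0.25, "Rest of world": 0.25}
bu = bottom_up(countries)
td = top_down(parent_market_usd_bn=50.0, target_share=0.042)

print(f"bottom-up {bu:.2f}, top-down {td:.2f}, gap {relative_gap(bu, td):.1%}")
```

Choosing the gap threshold that triggers a review is a judgment call. The sketch only reports the gap.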

3. Market Engineering & Validation

Market engineering involves the triangulation of data from multiple sources to minimize errors.

- Extensive gathering of raw data

- Statistical regression & trend analysis

- Cross-verification with experts

- Publication of market study
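For the statistical regression and trend analysis step, one common technique is to fit a log-linear trend, ln(value) = a + b·t, by ordinary least squares. This is not necessarily the technique MarketsNXT uses. The fitted slope converts to an annual growth rate as e^b - 1. This is a minimal sketch in plain Python. It uses a synthetic series that grows exactly 20% a year, so the recovered rate can be checked.

```python
import math


def fit_log_linear(years: list[float], values: list[float]) -> tuple[float, float]:
    """Ordinary least squares fit of ln(value) = a + b * year; returns (a, b)."""
    logs = [math.log(v) for v in values]
    n = len(years)
    mean_t = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((t - mean_t) ** 2 for t in years)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(years, logs))
    b = sxy / sxx
    a = mean_y - b * mean_t
    return a, b


def trend_growth_rate(years: list[float], values: list[float]) -> float:
    """Annual growth rate implied by the fitted log-linear slope."""
    _, b = fit_log_linear(years, values)
    return math.exp(b) - 1.0


# Synthetic series growing exactly 20% per year (illustrative, not market data).
years = [2019.0, 2020.0, 2021.0, 2022.0, 2023.0]
values = [1.0 * 1.2 ** (t - 2019) for t in years]

print(f"Fitted trend growth: {trend_growth_rate(years, values):.1%}")
```

On real, noisy series, the fitted rate smooths year-to-year swings instead of depending on two endpoints. This is the main reason to prefer it over a simple endpoint CAGR.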

Client-Centric Research Delivery

MarketsNXT positions research delivery as a collaborative engagement rather than a static information transfer. Analysts work with clients to clarify objectives, interpret findings, and connect insights to strategic decisions.