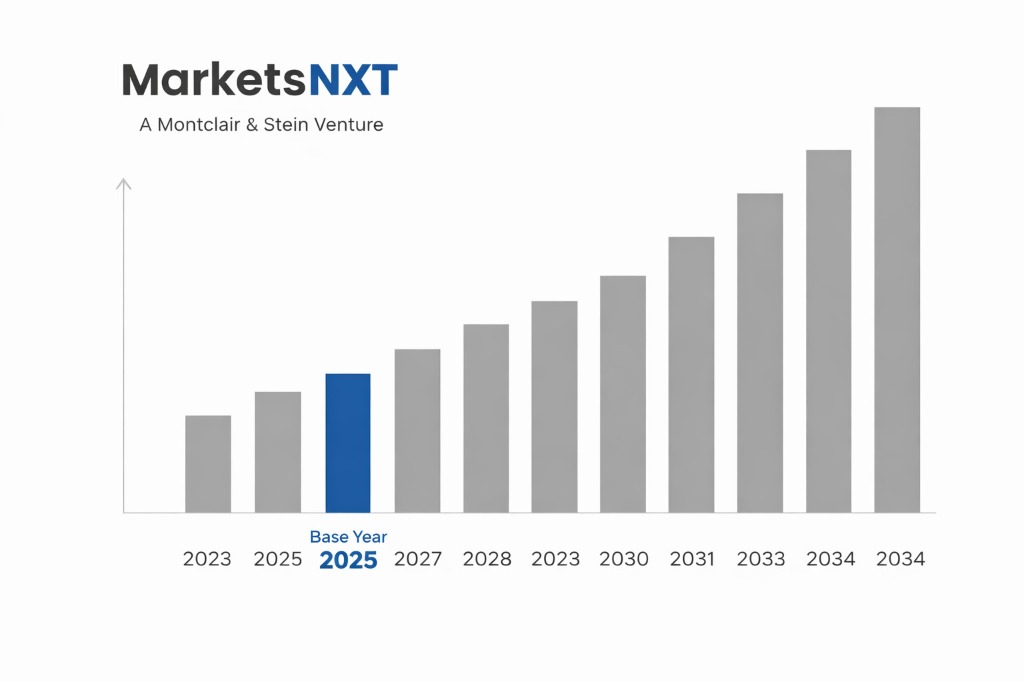

Agentic AI Infrastructure and Orchestration Platform Market Size, Share & Forecast 2026–2034

Report Highlights

- Market Size 2024: USD 3.4 billion
- Market Size 2034: USD 47.2 billion
- CAGR: 32.0%
- Market Definition: Software platforms, orchestration frameworks, memory systems, and tooling infrastructure enabling multi-step AI agent deployment in enterprise environments.
- Leading Companies: Anthropic, Microsoft, Salesforce, Google DeepMind, Amazon Web Services
- Base Year: 2025
- Forecast Period: 2026–2034
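
As a quick consistency check on the headline figures, the growth rate implied by two market sizes can be recomputed with the standard compound annual growth rate formula. The sketch below is illustrative only and is not the report's forecasting model. The helper names `cagr` and `implied_start` are assumptions introduced here, and the report does not state which start year its 32.0% figure is measured from.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def implied_start(end_value: float, rate: float, years: int) -> float:
    """Starting value that grows to end_value at `rate` over `years` periods."""
    return end_value / (1 + rate) ** years


# Figures taken from the report highlights (USD billion).
SIZE_2024 = 3.4
SIZE_2034 = 47.2
STATED_CAGR = 0.32

# Growth rate implied by the 2024 and 2034 endpoints (10 annual steps).
rate_2024_2034 = cagr(SIZE_2024, SIZE_2034, years=10)

# 2025 market size that the stated CAGR would imply (9 annual steps to 2034).
# Assumption: the 32.0% rate runs from the 2025 base year to 2034.
base_2025 = implied_start(SIZE_2034, STATED_CAGR, years=9)

print(f"Implied CAGR 2024-2034: {rate_2024_2034:.1%}")
print(f"Implied 2025 base at 32.0%: USD {base_2025:.2f} billion")
```

Under these assumptions, the 2024 and 2034 endpoints imply a rate of roughly 30%, while a 32.0% rate over the nine years from 2025 implies a 2025 base of roughly USD 3.9 billion. Which reading the report intends is not stated.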

Who Controls This Market — And Who Is Threatening That Control

The agentic AI infrastructure market has no single dominant incumbent. In the previous era of enterprise software, one vendor typically controlled a category for a decade before disruption; this market is instead being contested simultaneously at three architectural layers: the model layer (which reasoning engine powers the agent), the orchestration layer (how multi-step workflows are planned and executed), and the tool connectivity layer (how agents access external systems and data). Anthropic's release of the Model Context Protocol (MCP) in November 2024 was a significant attempt to establish connectivity standards that extend the reach of Claude-based agents, and its adoption by over 1,200 tool providers by mid-2025 gives Anthropic a network-effects position in the connectivity layer that competing model providers are scrambling to match. Microsoft's Copilot Studio, embedded within the Office 365 and Azure ecosystem used by over 300 million enterprise users, has a distribution advantage that no startup or specialist AI vendor can replicate. The open question is whether enterprise users prefer the convenience of platform-native agents over the capability of best-of-breed specialist deployments.

The threat to established positions comes from three directions. First, open-source orchestration frameworks are preventing any single vendor from owning the workflow execution layer — enterprises running LangGraph or AutoGen are not dependent on any proprietary orchestration vendor's pricing or roadmap. Second, the rapid capability improvement of open-weight models (Llama 3.3, Mistral Large, Qwen 2.5) is eroding the model quality moat that justified proprietary API dependency for cost-sensitive, high-volume agent deployments. Third, vertical specialist vendors — Harvey AI (legal), Observe AI (contact centre), Cohere (enterprise search and knowledge agents) — are demonstrating that domain-specific agents with curated tool integrations and compliance frameworks outperform general-purpose agents in regulated industries, potentially fragmenting the enterprise market in ways that favour specialists over platforms.

Industry Snapshot

The agentic AI infrastructure market encompasses four functional segments: model providers supplying the reasoning backbone for agent systems (Anthropic, OpenAI, Google, Cohere), orchestration platforms managing multi-agent workflow execution (Microsoft Copilot Studio, Salesforce Agentforce, ServiceNow AI Agents, LangChain), memory and knowledge management infrastructure (Pinecone, Weaviate, Chroma, Zilliz), and agent security and observability tooling (Protect AI, Robust Intelligence, Arize AI, Langfuse). The market is growing at the intersection of three converging enterprise priorities: operational cost reduction (agents replacing human execution in defined workflow categories), software development velocity (code agents accelerating development cycles), and process quality improvement (agents applying consistent decision logic at volumes that human teams cannot sustain). Enterprise spending on agentic AI infrastructure is concentrated in financial services, technology, healthcare, and retail — sectors with high transaction volumes, structured workflow definitions, and sufficient IT sophistication to manage agent governance requirements.

The Forces Accelerating Demand Right Now

Labour cost pressure is the primary commercial driver of agentic AI adoption. In developed market economies, the cost of knowledge worker labour (customer service agents, junior analysts, software developers, compliance reviewers) has grown at 4%–7% annually since 2020, while the capability and reliability of AI agent systems have improved at a rate that makes substitution economically compelling for defined workflow categories. The unit economics are stark: a well-configured AI customer service agent costs USD 0.10–0.50 per interaction versus USD 3–15 for human agent handling, at comparable or superior resolution rates for structured query types. The maturation of the MCP ecosystem is a second accelerant: integration overhead has collapsed from months of custom API development to days of MCP server configuration, removing the most significant deployment barrier for enterprises that lack large AI engineering teams. The third driver is competitive pressure from early adopters: financial services and technology companies that deployed production agent systems in 2024–2025 are reporting 25%–40% reductions in operational costs for agent-amenable workflow categories, creating board-level urgency for competitors to match their cost structures.
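
The per-interaction cost ranges quoted above can be turned into a savings range with a few lines of arithmetic. The following is a minimal sketch, not a pricing model: the names `CostRange`, `savings_per_interaction`, and `annual_savings` are introduced here for illustration, the interaction volume is a hypothetical input, and the calculation assumes comparable resolution rates and ignores platform, integration, and governance costs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRange:
    """Per-interaction cost range in USD."""
    low: float
    high: float


# Ranges quoted in the text above.
AGENT_COST = CostRange(low=0.10, high=0.50)
HUMAN_COST = CostRange(low=3.00, high=15.00)


def savings_per_interaction(human: CostRange, agent: CostRange) -> CostRange:
    """Narrowest and widest saving from moving one interaction to an AI agent."""
    return CostRange(low=human.low - agent.high, high=human.high - agent.low)


def annual_savings(volume: int, human: CostRange, agent: CostRange) -> CostRange:
    """Scale the per-interaction saving range by a yearly interaction volume."""
    per_unit = savings_per_interaction(human, agent)
    return CostRange(low=volume * per_unit.low, high=volume * per_unit.high)


# Hypothetical volume, chosen only for illustration (not from the report).
EXAMPLE_VOLUME = 1_000_000

per_unit = savings_per_interaction(HUMAN_COST, AGENT_COST)
total = annual_savings(EXAMPLE_VOLUME, HUMAN_COST, AGENT_COST)

print(f"Saving per interaction: USD {per_unit.low:.2f} to {per_unit.high:.2f}")
print(f"Saving on {EXAMPLE_VOLUME:,} interactions: "
      f"USD {total.low:,.0f} to {total.high:,.0f}")
```

The low end pairs the cheapest human handling with the most expensive agent handling, and the high end does the reverse, so the range brackets every combination of the quoted figures.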

What Is Holding This Market Back

Enterprise agent deployment faces three material constraints that are slowing adoption below the pace that capability development alone would suggest. Trust and reliability concerns are the primary barrier: agents that can take irreversible actions in external systems must meet a reliability threshold that current systems do not consistently achieve for complex, multi-step workflows involving ambiguous decision points. Security is part of the same concern. Prompt injection, where malicious content in documents or web pages redirects agent behaviour, is a genuine threat for agents that browse the web or process untrusted documents, and no complete mitigation existed as of 2025. Governance frameworks are the second constraint: most enterprise risk and compliance functions were not designed to audit, monitor, or manage systems that take autonomous actions, and building the oversight infrastructure for agent governance adds implementation overhead that extends deployment timelines. The third constraint is the skills gap in agent prompt engineering, evaluation, and governance, which limits capacity at enterprises without existing AI engineering teams. Consulting firms and system integrators are addressing this gap, but not yet at the scale the market requires.

The Investment Case: Bull, Bear, and What Decides It

The bull case rests on the magnitude of the addressable labour substitution opportunity: global knowledge worker labour costs exceed USD 25 trillion annually, and even a 5% substitution rate for agent-amenable workflow categories at current agent economics represents USD 1.25 trillion of annual value creation. At a 10× revenue multiple, conservative for infrastructure businesses with recurring revenue and switching costs, and treating that annual value as revenue to the companies that capture it, this implies USD 12.5 trillion of market capitalisation. Infrastructure vendors providing orchestration, connectivity, and memory tools would capture a portion of that total through platform fees and API revenue.
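
The bull-case arithmetic can be reproduced, and its sensitivity to the substitution rate explored, with a short calculation. This is a sketch under the paragraph's own assumptions, not a valuation model: the function name `bull_case` is introduced here, only the 5% rate comes from the text, and the 1% and 10% rates are hypothetical values added to show how the result scales.

```python
def bull_case(labour_cost_usd: float, substitution_rate: float,
              revenue_multiple: float) -> tuple[float, float]:
    """Return (annual value created, implied market capitalisation) in USD."""
    if not 0 <= substitution_rate <= 1:
        raise ValueError("substitution_rate must be between 0 and 1")
    annual_value = labour_cost_usd * substitution_rate
    return annual_value, annual_value * revenue_multiple


LABOUR_COST_USD = 25e12   # global knowledge worker labour cost, from the text
REVENUE_MULTIPLE = 10     # 10x multiple, from the text

# Base scenario stated in the text: 5% substitution.
base_value, base_cap = bull_case(LABOUR_COST_USD, 0.05, REVENUE_MULTIPLE)

# Sensitivity: the 1% and 10% rates are hypothetical, not from the report.
scenarios = {
    rate: bull_case(LABOUR_COST_USD, rate, REVENUE_MULTIPLE)
    for rate in (0.01, 0.05, 0.10)
}

for rate, (value, cap) in scenarios.items():
    print(f"{rate:.0%} substitution: USD {value / 1e12:.2f}T annual value, "
          f"USD {cap / 1e12:.1f}T implied capitalisation")
```

Because both steps are simple multiplications, the implied capitalisation scales linearly with the substitution rate, which makes that rate the assumption the whole bull case hinges on.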

The bear case centres on commoditisation: if orchestration frameworks are open-source, models are becoming open-weight, and tool connectivity is standardised through MCP, the infrastructure layer may commoditise before the market leaders have built the switching costs and data advantages needed to sustain premium pricing. Which case prevails depends on whether enterprise agent deployments generate proprietary workflow data and integration depth that create switching costs analogous to those that CRM and ERP vendors have historically maintained. The most durable infrastructure businesses are those whose product improves the more it is used, and the question is whether agentic AI platforms demonstrate this property at enterprise scale.

Where the Next USD Billion Is Being Built

Agent security and observability is the fastest-growing subsegment — the governance gap in current enterprise deployments creates USD 3–5 billion of addressable demand for systems that audit agent actions, detect anomalous behaviour, and enforce permission boundaries. Agent evaluation tooling — systematic frameworks for measuring agent performance, reliability, and safety across workflow categories — is the second high-growth area, with early leaders including Braintrust, Langfuse, and Arize AI capturing initial enterprise budgets from AI teams building production agent systems. Vertical agent platforms in regulated industries — legal, financial services, healthcare, pharmaceutical — are building compliance-native agent deployments that command significant pricing premiums over general-purpose infrastructure and have natural switching costs from domain-specific fine-tuning and workflow integration investment.
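
To make the idea of auditing agent actions and enforcing permission boundaries concrete, the sketch below shows one possible shape for such a control. It is a hypothetical illustration, not the design of any vendor named above: the class names `ToolGuard` and `AuditRecord`, the agent and tool identifiers, and the three decision labels are all assumptions introduced here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One entry in the audit trail of requested tool calls."""
    timestamp: str
    agent_id: str
    tool: str
    decision: str  # "allowed", "denied", or "needs_approval"


@dataclass
class ToolGuard:
    """Checks each requested tool call against a per-agent allowlist."""
    allowed_tools: dict[str, set[str]]   # agent id -> tools it may call
    irreversible_tools: set[str]         # tools that need human sign-off
    audit_log: list[AuditRecord] = field(default_factory=list)

    def check(self, agent_id: str, tool: str,
              approved_by_human: bool = False) -> str:
        if tool not in self.allowed_tools.get(agent_id, set()):
            decision = "denied"            # outside the permission boundary
        elif tool in self.irreversible_tools and not approved_by_human:
            decision = "needs_approval"    # irreversible action, no sign-off yet
        else:
            decision = "allowed"
        # Every request is recorded, whatever the outcome.
        self.audit_log.append(AuditRecord(
            datetime.now(timezone.utc).isoformat(), agent_id, tool, decision))
        return decision


# Hypothetical agent and tool names, for illustration only.
guard = ToolGuard(
    allowed_tools={"support-agent": {"lookup_order", "issue_refund"}},
    irreversible_tools={"issue_refund"},
)
decisions = [
    guard.check("support-agent", "lookup_order"),
    guard.check("support-agent", "issue_refund"),
    guard.check("support-agent", "issue_refund", approved_by_human=True),
    guard.check("support-agent", "delete_account"),
]
print(decisions)
```

Logging denied and pending requests alongside allowed ones is a deliberate choice in this sketch: anomaly detection needs the attempts an agent was refused as much as the actions it completed.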

Market at a Glance

| Parameter | Details |

|---|---|

| Market Size 2024 | USD 3.4 billion |

| Market Size 2034 | USD 47.2 billion |

| Growth Rate | 32.0% CAGR (2026–2034) |

| Most Critical Decision Factor | Technology maturity and regulatory readiness |

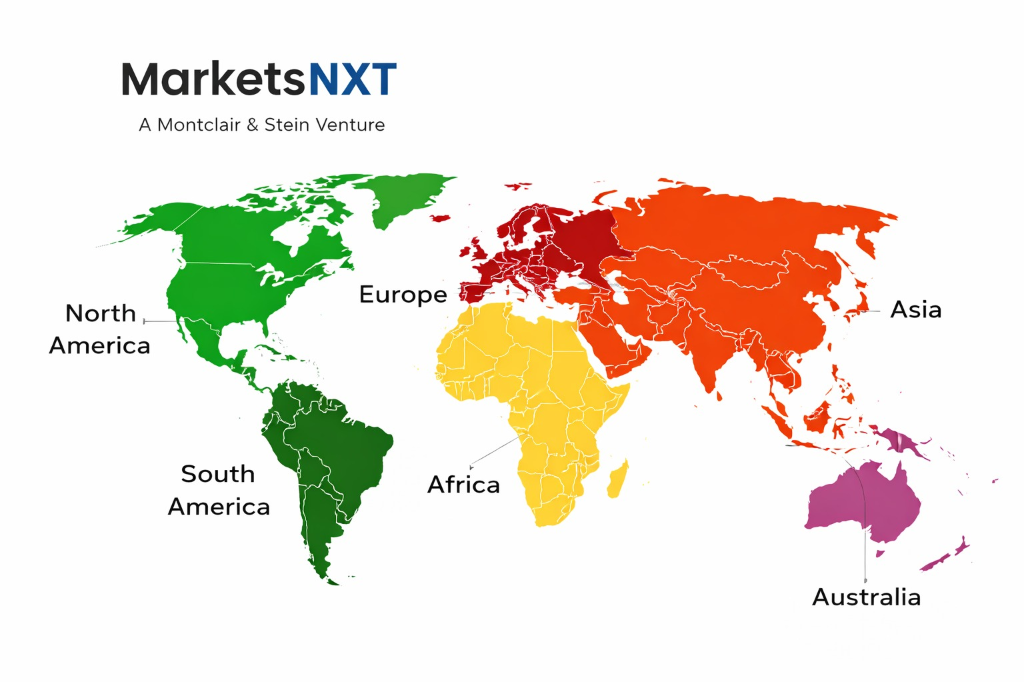

| Largest Region | North America |

| Competitive Structure | Fragmented — multiple platform and specialist players |

Regional Intelligence

North America accounts for approximately 54% of global agentic AI infrastructure spend in 2024, driven by the concentration of hyperscaler providers, enterprise software vendors, and technology-sector enterprise buyers in the US. The US market benefits from the most advanced AI regulatory environment for enterprise deployment: the NIST AI Risk Management Framework and sector-specific guidance from financial regulators provide clearer compliance pathways than most other jurisdictions offer. Europe is the second-largest region but is growing more slowly, for two reasons: GDPR data residency requirements complicate multi-cloud agent deployments, and the EU AI Act classifies certain autonomous agent systems as high-risk applications requiring mandatory human oversight documentation. Asia-Pacific, led by Japan, South Korea, and Singapore, is the fastest-growing region over 2024–2028, driven by acute shortages of knowledge workers that make the ROI case for agent adoption more compelling than in economies with more flexible labour markets.

Leading Market Participants

- Anthropic leads on model quality and protocol standards

- Microsoft dominates on distribution

- Salesforce Agentforce

- ServiceNow

- UiPath

- Automation Anywhere

Long-Term Market Perspective

The agentic AI infrastructure market will consolidate around platform layers by 2030: a small number of enterprise platforms will capture the majority of orchestration spend, while the model layer continues to commoditise and the tool connectivity layer standardises around open protocols. The businesses that sustain premium valuations will be those that have accumulated proprietary workflow data, enterprise-specific fine-tuning, and deep integration with mission-critical enterprise systems, the characteristics that create switching costs and justify recurring revenue models. By 2034, agentic AI infrastructure is likely to be a category within larger enterprise software platforms rather than a standalone market, as orchestration, memory, and tool connectivity are absorbed into the product suites of the major enterprise software vendors that have the distribution to reach the full addressable market.

Research Framework and Methodological Approach

Process stages: Information Procurement, Information Analysis, Market Formulation & Validation

Overview of Our Research Process

MarketsNXT follows a structured, multi-stage research framework designed to ensure accuracy, reliability, and strategic relevance of every published study. Our methodology integrates globally accepted research standards with industry best practices in data collection, modeling, verification, and insight generation.

1. Data Acquisition Strategy

Robust data collection is the foundation of our analytical process. MarketsNXT employs a layered sourcing model.

Secondary sources

- Company annual reports & SEC filings
- Industry association publications
- Technical journals & white papers
- Government databases (World Bank, OECD)
- Paid commercial databases

Primary sources

- Key opinion leader (KOL) interviews (CEOs, marketing heads)
- Surveys with industry participants
- Distributor & supplier discussions
- End-user feedback loops
- Questionnaires for gap analysis

Analytical Modeling and Insight Development

After collection, datasets are processed and interpreted using multiple analytical techniques to identify baseline market values, demand patterns, growth drivers, constraints, and opportunity clusters.

2. Market Estimation Techniques

MarketsNXT applies multiple estimation pathways to strengthen forecast accuracy.

Bottom-up Approach

Aggregating granular demand data from country level to derive global figures.

Top-down Approach

Breaking down the parent industry market to identify the target serviceable market.
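
The two estimation pathways can be sketched side by side, together with a simple check of how far they diverge. This is a generic illustration, not MarketsNXT's model: the function names are introduced here, and every number is a placeholder chosen only to make the arithmetic visible, not a figure from the study.

```python
def bottom_up(country_demand: dict[str, float]) -> float:
    """Sum country-level demand estimates into a global figure."""
    return sum(country_demand.values())


def top_down(parent_market: float, target_share: float) -> float:
    """Carve the target market out of the parent industry market."""
    if not 0 <= target_share <= 1:
        raise ValueError("target_share must be between 0 and 1")
    return parent_market * target_share


def divergence(estimate_a: float, estimate_b: float) -> float:
    """Relative gap between two estimates, measured against their midpoint."""
    midpoint = (estimate_a + estimate_b) / 2
    return abs(estimate_a - estimate_b) / midpoint


# Placeholder inputs in USD billion (illustrative only, not report data).
country_demand = {"Country A": 1.0, "Country B": 0.6, "Country C": 0.4}
parent_market = 40.0
target_share = 0.055

bu = bottom_up(country_demand)
td = top_down(parent_market, target_share)
gap = divergence(bu, td)

print(f"Bottom-up: {bu:.2f}  Top-down: {td:.2f}  Divergence: {gap:.1%}")
```

A large divergence between the two figures would signal that one set of inputs needs revisiting before either estimate is carried into a forecast.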

Supply Chain Anchored Forecasting

MarketsNXT integrates value chain intelligence into its forecasting structure to ensure commercial realism and operational alignment.

Supply-Side Evaluation

Revenue and capacity estimates are developed through company financial reviews, product portfolio mapping, benchmarking of competitive positioning, and commercialization tracking.

3. Market Engineering & Validation

Market engineering involves the triangulation of data from multiple sources to minimize errors.

- Extensive gathering of raw data.
- Statistical regression & trend analysis.
- Cross-verification with experts.
- Publication of market study.

Client-Centric Research Delivery

MarketsNXT positions research delivery as a collaborative engagement rather than a static information transfer. Analysts work with clients to clarify objectives, interpret findings, and connect insights to strategic decisions.