AI Glasses (Smart Eyewear) Market — Global Strategic Analysis, Key Decisions, and Forecast 2026–2034

Report Highlights

- ✓Market Size 2024: Approximately USD 3.2 billion

- ✓Market Size 2034: Approximately USD 28.6 billion

- ✓CAGR Range: 24.4%–27.9%

- ✓Market Definition: AI glasses and smart eyewear are wearable optical devices integrating augmented reality displays, AI inference processors, cameras, microphones, and connectivity into spectacle or sunglass form factors — enabling hands-free visual overlay, real-time AI assistance, spatial computing, and ambient sensing in consumer, enterprise, and industrial applications

- ✓Top 3 Critical Questions: Has Meta Ray-Ban's commercial success validated consumer mass-market smart eyewear or only a narrow social use case? Which hardware breakthrough — display optics, battery density, or on-device inference — determines when AR smart glasses achieve mass-market form factors? Does enterprise industrial AR (currently the most commercially mature segment) get disrupted by consumer platforms achieving comparable capability at one-tenth the cost?

- ✓First 5 Companies: Meta (Ray-Ban Meta), Apple, Google, Microsoft (HoloLens), Snap

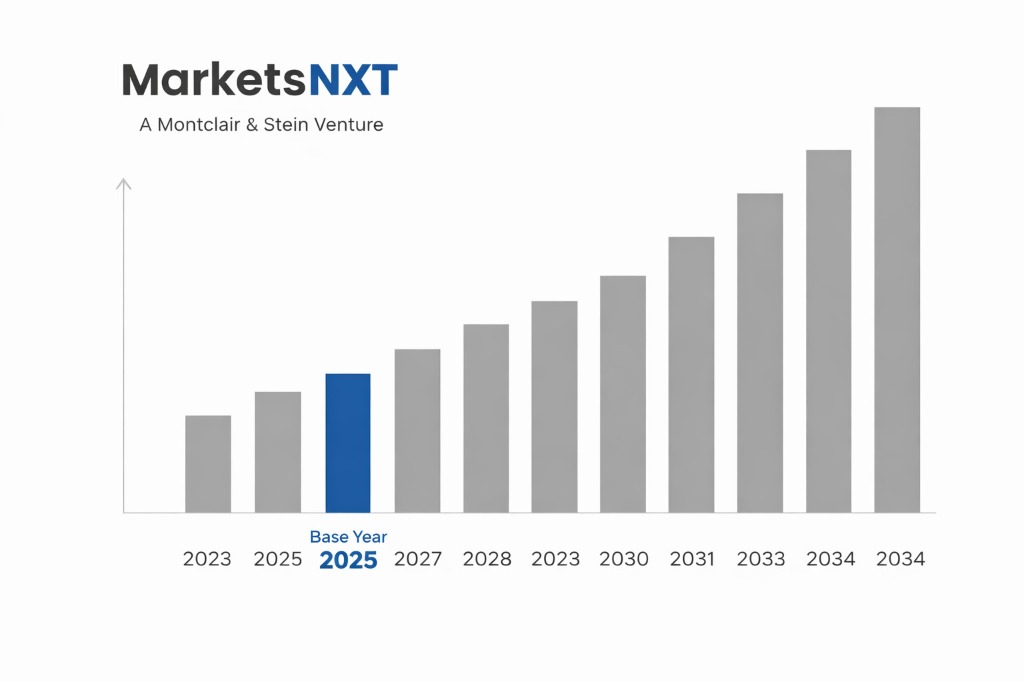

- ✓Base Year: 2025

- ✓Forecast Period: 2026–2034

- ✓Key Decision Point: Apple Vision Pro's successor product form factor — specifically whether Apple pursues an eyeglass form factor in the 2026–2027 product cycle — is the single most consequential commercial decision for the smart eyewear market, as Apple's supply chain commitments would validate the waveguide display component ecosystem currently dominated by fragile single-source suppliers

Industry Snapshot

The AI Glasses (Smart Eyewear) market was valued at approximately USD 3.2 billion in 2024 and is projected to reach approximately USD 28.6 billion by 2034, growing at a CAGR of 24.4%–27.9% over the forecast period. The market is in an early growth stage. Consumer smart eyewear is transitioning from novelty to utility following the success of Meta's Ray-Ban Meta, which shipped an estimated 2 million units in 2024, while enterprise AR remains the commercially mature segment, anchored by industrial use cases with documented ROI. The past three years have fundamentally changed the market's strategic context in three ways. First, Meta's 2023 decision to abandon its VR-first spatial computing strategy in favour of an AI assistant-first camera glasses strategy proved commercially prescient. Second, Apple's Vision Pro launch validated premium spatial computing, but at a USD 3,499 price point incompatible with mass-market eyewear. Third, the waveguide display technology required for true AR overlay has advanced from laboratory demonstration to limited commercial production at companies including WaveOptics (acquired by Snap) and Lumus.
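As a quick sanity check on the headline growth rate, the implied CAGR can be recomputed from the report's own endpoints. The sketch below is illustrative only: it uses the 2024 and 2034 values quoted above plus the 2025 value from the Market at a Glance table, and the function and variable names are assumptions introduced here, not part of the report's model.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.24 means 24%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted in this report, in USD billion.
size_2024 = 3.2
size_2025 = 4.0   # base-year value from the Market at a Glance table
size_2034 = 28.6

cagr_from_2024 = cagr(size_2024, size_2034, 2034 - 2024)
cagr_from_2025 = cagr(size_2025, size_2034, 2034 - 2025)

print(f"Implied CAGR 2024-2034: {cagr_from_2024:.1%}")
print(f"Implied CAGR 2025-2034: {cagr_from_2025:.1%}")
```

Both endpoint pairs imply roughly 24.4% to 24.5% per year, which matches the lower end of the quoted 24.4%–27.9% range; the upper end presumably reflects a more optimistic trajectory than the USD 28.6 billion endpoint.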

The strategic context for the 2026–2034 forecast period is a race between two architectures: AI camera glasses without true AR display (Meta Ray-Ban model — commercially proven, mass-market pricing achievable, no waveguide required) and full AR glasses with holographic display overlay (Apple, Google, Microsoft — commercially aspirational, waveguide display bottleneck limits form factor). The winner-takes-most dynamics will depend on whether waveguide display optics achieve the dual milestones of daylight-visible brightness and sub-8mm thickness required for socially acceptable eyewear form factors before 2028.

Before You Commit Capital: The Questions That Must Be Answered

Has Meta Ray-Ban's commercial success validated the mass-market smart eyewear category or only a narrow social sharing use case?

Meta Ray-Ban's approximately 2 million units in 2024 validates that consumers will pay USD 299–349 for AI assistant and camera functionality in socially acceptable eyewear form — a meaningful proof point. However, the use cases driving adoption (photo and video capture, music streaming, Meta AI voice assistant) are not the same use cases that justify the USD 10–30 billion market projections anchored on spatial computing and AR overlay. The mass market validation extends to camera-AI eyewear; the AR overlay opportunity remains unvalidated at mass-market price points.

Which hardware breakthrough has the longest lead time and most constrains the full AR glasses roadmap?

Waveguide display optics — specifically achieving daylight-visible brightness above 10,000 nits at sub-8mm waveguide thickness with acceptable field of view — is the binding constraint with the longest development timeline. Current commercial waveguides from Lumus and WaveOptics achieve either brightness or thinness but not both simultaneously at production yields above 40%. Microsoft HoloLens 2 uses 47mm-thick waveguides incompatible with eyeglass form factors. Solving this constraint requires materials science advances in diffractive optics that industry timelines estimate at 3–5 years from current demonstrated capability.

Is enterprise industrial AR vulnerable to disruption from consumer platforms achieving comparable capability at lower cost?

Partially. Industrial AR platforms (RealWear, Vuzix, Honeywell) command USD 1,500–5,000 per device and serve documented enterprise ROI use cases in manufacturing maintenance, field service, and warehousing. Consumer platforms achieving comparable hands-free AI assistance capability at USD 299–599 would disrupt the lower end of enterprise AR — lightweight field service and warehouse picking use cases. High-end industrial AR requiring MIL-SPEC durability, hazardous location certification, and enterprise security frameworks is structurally protected from consumer platform disruption through 2030.

What is the realistic battery life barrier to full-day smart eyewear use, and when does it get solved?

Current AI glasses deliver 3–6 hours of mixed use with camera, AI inference, and connectivity active before they need charging. Full-day wear, the threshold required for behaviour change and habitual adoption, requires 8–12 hours of mixed active/passive use. Battery energy density improvements of approximately 15%–20% annually, combined with on-device inference efficiency improvements from dedicated neural processing silicon, suggest full-day battery life is achievable by 2027–2028 in mass-market form factors.
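To see how much of the gap battery improvements alone could close, the compounding arithmetic can be sketched as follows. This is a deliberately simplified illustration, assuming runtime scales in direct proportion to energy density at fixed battery volume and fixed power draw; that assumption and the function name are introduced here and are not taken from the report.

```python
import math


def years_to_reach(current_hours: float, target_hours: float, annual_gain: float) -> float:
    """Years of compounding at annual_gain needed to grow current_hours to target_hours."""
    return math.log(target_hours / current_hours) / math.log(1 + annual_gain)


# Ranges quoted above: 3-6 hours today, 8-12 hours needed, 15%-20% annual gains.
best_case = years_to_reach(current_hours=6, target_hours=8, annual_gain=0.20)
worst_case = years_to_reach(current_hours=3, target_hours=12, annual_gain=0.15)

print(f"Best case (6h -> 8h at 20%/yr):   {best_case:.1f} years")
print(f"Worst case (3h -> 12h at 15%/yr): {worst_case:.1f} years")
```

Under this simplification the best case is under two years while the worst case is close to a decade, which suggests why the 2027–2028 estimate depends on inference efficiency gains as well as battery density.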

How does the regulatory environment for always-on camera eyewear affect consumer adoption in key markets?

Significant friction exists. Germany's BDSG and France's RGPD interpretations require affirmative consent from individuals photographed or recorded by smart eyewear in public — creating legal uncertainty for always-on camera glasses in European public spaces. Several US states have introduced legislation requiring visible recording indicators on smart eyewear. Social norms barriers — the "Glasshole" effect documented during Google Glass's 2013–2014 failure — remain an adoption risk that Meta has partially addressed through the Ray-Ban brand's fashion credibility, but the regulatory uncertainty adds commercial risk for EU market deployment.

The Drivers That Create Entry Windows

For market entrants, the most significant near-term driver is the opening of the Ray-Ban Meta platform ecosystem. Meta's 2024 announcement of third-party developer APIs for Ray-Ban Meta creates an app ecosystem opportunity for software companies targeting the installed base of 3+ million Ray-Ban Meta users. Enterprise software vendors building workflow applications for AI camera glasses (remote assistance, documentation, quality inspection) have a first-mover window in 2025–2026 before Meta's own first-party applications saturate the obvious use cases. For existing players, the tailwind is component maturation: Qualcomm's Snapdragon AR2 Gen 1 chip, purpose-built for eyewear power envelopes at 1.7W TDP, enables on-device AI inference that previous smart eyewear generations had to offload to paired smartphones, fundamentally improving standalone capability.

The most significant entry window created by regulatory tailwinds is industrial AR in EU-regulated manufacturing environments. EU machinery safety regulations (Machinery Regulation 2023/1230, effective January 2027) create mandatory digital work instruction requirements for complex assembly operations that smart eyewear is uniquely positioned to deliver. Airbus's documented 25% assembly time reduction using AR-assisted assembly procedures at its Hamburg facility has been cited in EU manufacturing productivity programs as a model for industrial AR deployment — creating a policy-supported demand driver for industrial smart eyewear that consumer platform disruption does not threaten.

The Barriers That Determine Who Can Compete

The barrier most affecting new entrants at the consumer tier is the fashion and brand legitimacy requirement that hardware capability alone cannot provide. Meta's Ray-Ban partnership took 3+ years of co-development and required Ray-Ban's established fashion brand to provide social acceptability that Google Glass lacked despite superior technology. Any consumer smart eyewear entrant without an established eyewear brand partnership — or the ability to create a new fashion-credible brand — faces a near-insurmountable perception barrier regardless of technical specification.

The barrier most affecting enterprise AR competitors is the integration cost of enterprise workflows. Industrial AR delivers ROI when deeply integrated with ERP systems (SAP, Oracle), maintenance management systems (IBM Maximo, SAP PM), and quality management systems — integrations requiring 6–18 months of professional services per enterprise customer. Platform vendors with pre-built integrations (PTC Vuforia, Scope AR, Upskill) have accumulated integration libraries that represent 3–5 years of enterprise customer co-development — an asset that new entrants cannot acquire through capital investment alone.

Market at a Glance

| Parameter | Details |

|---|---|

| Market Size 2025 | Approximately USD 4.0 billion |

| Market Size 2034 | Approximately USD 28.6 billion |

| Growth Rate | 24.4%–27.9% CAGR |

| Most Critical Decision Factor | Apple eyeglass form factor product decision; waveguide display optics commercialisation timeline |

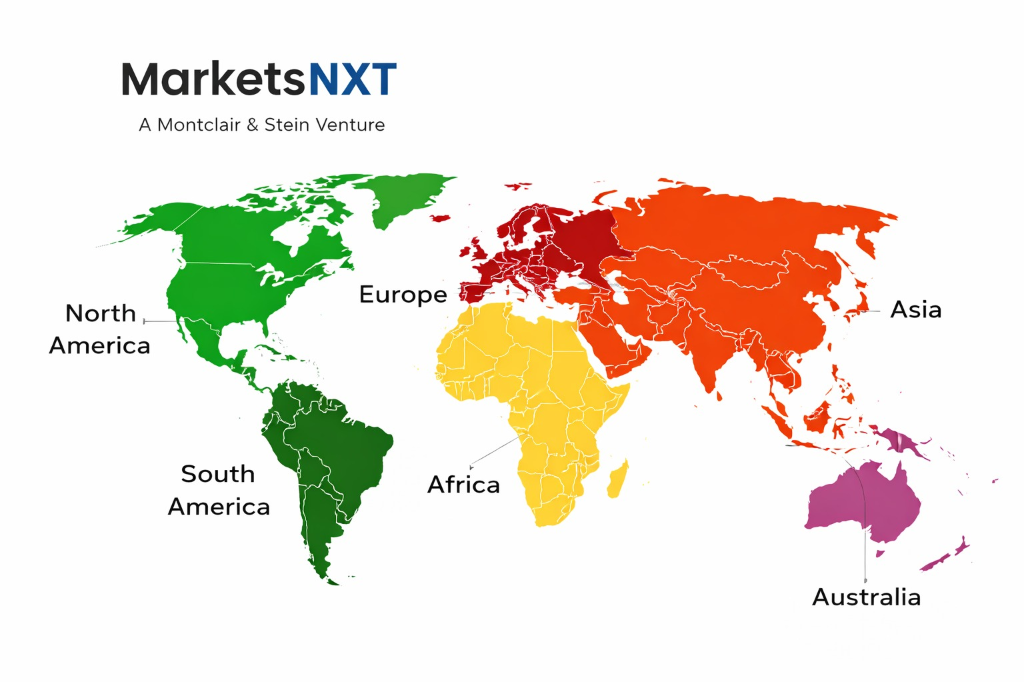

| Largest Region | North America (approximately 46% of revenue) |

| Competitive Structure | Consumer tier: Meta dominant; Enterprise tier: fragmented with PTC, RealWear, Vuzix leading |

| Segments Covered | Consumer AI Camera Glasses, Full AR Smart Glasses, Industrial and Enterprise AR, Healthcare AR |

Where to Enter, Where to Watch, Where to Wait

North America is the primary strategic entry point for both consumer and enterprise segments. US consumers represent approximately 40%–45% of global consumer smart eyewear revenue, with the highest early adopter concentration and the most developed developer ecosystem for smart eyewear applications. Enterprise industrial AR entry in North America benefits from the largest manufacturing sector actively piloting AR-assisted operations (Boeing, GE Aviation, Lockheed Martin all have active enterprise AR programs) and the deepest venture capital ecosystem supporting AR enterprise software startups. Enterprise software developers targeting the Ray-Ban Meta platform should launch US-first given Meta's strongest retail distribution and AI assistant capability in English language markets.

Asia Pacific is the watch market — specifically Japan and South Korea, where enterprise AR adoption in electronics manufacturing (Sony, Samsung, LG Display) is growing at 18%–24% annually and where government manufacturing competitiveness programs actively support digital factory technology adoption. Europe is the most complex market entry: industrial AR has the strongest policy tailwind from EU manufacturing digitalisation programs, but consumer camera glasses face the most developed regulatory friction from GDPR and emerging recording consent legislation. Latin America and Middle East should be planned for entry only after North American market position is established.

Who Is Winning, Who Is Vulnerable, and Why

Meta is winning the consumer camera glasses segment definitively through the Ray-Ban partnership, fashion-credible design, and aggressive AI assistant integration that makes the product genuinely useful for everyday tasks. Meta's installed base of 3+ million units creates a platform flywheel — developer investment in Meta's platform attracts more users, creating a widening moat versus hardware-only competitors. Microsoft HoloLens 2 is simultaneously the most capable full AR platform commercially available and the most commercially vulnerable — HoloLens program restructuring in 2023 reduced Microsoft's commitment to the platform, creating customer uncertainty that competitors are actively exploiting in enterprise sales processes.

The competitive vulnerability most significant for the market overall is the absence of Apple from the consumer smart eyewear category. Apple's supply chain commitments — when they materialise — will validate waveguide display component suppliers, create a mass-market consumer AR category, and force all competitors to respond to Apple's design and UX standards. The player best positioned to exploit the pre-Apple window is Meta, whose Ray-Ban Meta ecosystem will have 4–5 years of developer and user base accumulation before Apple's eyeglass product reaches commercial scale.

Leading Market Participants

- Meta (Ray-Ban Meta)

- Microsoft (HoloLens 2)

- Apple (Vision Pro, future eyeglass form factor)

- Google (AR enterprise platform)

- Snap (Spectacles AR)

- RealWear (industrial AR headsets)

- Vuzix (enterprise smart glasses)

- PTC (Vuforia enterprise AR platform)

- Magic Leap (enterprise AR)

- Rokid (consumer and enterprise AR, China)

Long-Term Market Perspective

Two primary scenarios determine the market's 2034 revenue range. The base case, at approximately 60% probability, involves waveguide display optics achieving mass-market form factors by 2028, Apple launching an eyeglass product in 2027–2028, and the consumer AR overlay category reaching meaningful scale. This scenario supports the USD 28.6 billion market size. The downside case, at approximately 40% probability, involves waveguide display delays pushing full AR to 2030+, with the market growing more modestly to USD 16–20 billion, driven by camera-AI glasses without true AR overlay. A third, upside scenario, to which no separate probability is assigned, has Apple launching earlier and a waveguide breakthrough arriving by 2026–2027; it supports USD 38–45 billion by 2034.
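The scenario probabilities above can be combined into a probability-weighted 2034 figure. The sketch below is one illustrative way to do that, not the report's own method. It uses only the base and downside cases, since those are the only scenarios with stated probabilities, and the dictionary layout and names are assumptions made for this example.

```python
# Scenario probabilities and 2034 market sizes (USD billion) as quoted above.
# The upside scenario is left out because no probability is assigned to it.
scenarios = {
    "base": {"probability": 0.60, "low": 28.6, "high": 28.6},
    "downside": {"probability": 0.40, "low": 16.0, "high": 20.0},
}


def expected_value(bound: str) -> float:
    """Probability-weighted market size using each scenario's 'low' or 'high' bound."""
    return sum(s["probability"] * s[bound] for s in scenarios.values())


total_probability = sum(s["probability"] for s in scenarios.values())
expected_low = expected_value("low")
expected_high = expected_value("high")

print(f"Total probability covered: {total_probability:.0%}")
print(f"Probability-weighted 2034 size: USD {expected_low:.1f}-{expected_high:.1f} billion")
```

On these inputs the weighted figure falls between roughly USD 23.6 billion and USD 25.2 billion, below the USD 28.6 billion headline, because the headline corresponds to the base case alone.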

Capital investment priorities through 2034 are waveguide display optics (the binding hardware constraint), on-device AI inference silicon purpose-built for eyewear power envelopes, and enterprise workflow application development for established platforms. The trend most underweighted in mainstream analysis is the potential for AI camera glasses without AR overlay to capture a significantly larger market share than full AR glasses through 2030 — Meta's success suggests that ambient AI assistance and social documentation are consumer use cases with mass-market appeal that do not require holographic display, contradicting the assumption that full AR overlay is required for mainstream smart eyewear adoption.

Frequently Asked Questions

Why did Google Glass fail in 2013–2015 and what has changed to make smart eyewear viable now?

Google Glass failed due to four compounding factors: socially unacceptable design with visible camera indicator creating privacy anxiety, battery life of under 2 hours, limited useful applications, and USD 1,500 consumer price point. What has changed: Meta's Ray-Ban partnership solved the design problem through established fashion brand credibility; Qualcomm's Snapdragon AR2 solved the battery efficiency problem; AI assistant integration created a compelling daily use case; and USD 299–349 pricing achieved consumer mass-market accessibility.

What is the difference between AR smart glasses and VR headsets like Meta Quest?

VR headsets replace the user's visual field with a fully rendered digital environment — requiring the user to stop interacting with the physical world. AR smart glasses overlay digital content onto the user's natural view of the physical world, enabling simultaneous interaction with digital information and physical environment. This distinction makes AR glasses suitable for continuous all-day wear during normal activities, while VR headsets are used for discrete immersive sessions. The form factor requirement — AR glasses must be lightweight and socially wearable — is the primary technical challenge separating current consumer AR from mass-market adoption.

How significant is the enterprise AR market versus consumer smart eyewear in current revenue terms?

Enterprise and industrial AR accounts for approximately 62%–68% of current total smart eyewear revenue despite having a much smaller unit volume than consumer devices, due to enterprise device ASPs of USD 1,500–5,000 versus consumer device ASPs of USD 299–599. Enterprise AR is the commercially mature, revenue-generating segment today. Consumer camera glasses (Meta Ray-Ban) are growing faster in unit volume but at lower ASPs. The market's long-term revenue growth is contingent on consumer full AR smart glasses — the highest-ASP consumer segment — achieving mass-market form factors.
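A rough cross-check of this revenue split can be made from figures quoted elsewhere in this report. The sketch below is illustrative and rests on two simplifying assumptions introduced here: that the 62%–68% enterprise share applies to the 2024 market size of USD 3.2 billion, and that Ray-Ban Meta revenue can be approximated as estimated units multiplied by the retail price range.

```python
# Figures quoted in this report.
units_2024 = 2_000_000            # estimated Ray-Ban Meta units shipped in 2024
price_low, price_high = 299, 349  # USD retail price range
market_2024_usd = 3.2e9           # total market size in 2024
enterprise_share = (0.62, 0.68)   # enterprise share of total revenue

# Assumption: revenue is approximated as units x retail price.
rbm_revenue = (units_2024 * price_low, units_2024 * price_high)

# Revenue left for all non-enterprise devices, from smallest to largest.
non_enterprise_revenue = tuple(
    market_2024_usd * (1 - share) for share in reversed(enterprise_share)
)

print(f"Implied Ray-Ban Meta revenue: USD {rbm_revenue[0] / 1e9:.2f}-{rbm_revenue[1] / 1e9:.2f} billion")
print(f"Implied non-enterprise revenue: USD {non_enterprise_revenue[0] / 1e9:.2f}-{non_enterprise_revenue[1] / 1e9:.2f} billion")
```

On these assumptions, Ray-Ban Meta alone would account for about USD 0.6–0.7 billion, which fits inside the roughly USD 1.0–1.2 billion left over once the enterprise share is removed.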

What privacy regulations apply to always-on camera smart glasses and how do they vary by market?

The US has no federal law specifically governing smart eyewear cameras in public spaces, though several states (Illinois, Texas) have biometric data laws that apply to facial recognition functions. The EU's GDPR requires affirmative consent for identifiable image capture of individuals, creating legal ambiguity for always-on camera glasses in public. Germany's BDSG is the most restrictive, effectively requiring explicit consent for any recording of third parties. Manufacturers have responded with LED recording indicators — required by Meta on Ray-Ban Meta and mandated by UK ICO guidance.

Which enterprise use cases currently deliver the strongest documented ROI from smart eyewear deployment?

Remote expert assistance — field technicians receiving live visual guidance from expert engineers — delivers the most consistently documented ROI, with enterprises reporting 25%–40% reduction in mean time to repair and 15%–30% reduction in unnecessary site visits. Hands-free work instruction for complex assembly tasks delivers 15%–25% assembly time reduction, documented at Airbus, Boeing, and GE Aviation. Warehouse picking accuracy improvement — 99%+ versus 96%–98% for paper-based picking — delivers measurable cost savings in high-volume distribution centres where error cost per incident is USD 35–125.
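The warehouse picking figures translate into a cost range once a pick volume is chosen. The sketch below works through that arithmetic; the volume of one million picks per year is a hypothetical value chosen for illustration, and the function name is likewise an assumption, while the accuracy rates and per-error costs are the ranges quoted above.

```python
def avoided_error_cost(picks: int, baseline_accuracy: float,
                       assisted_accuracy: float, cost_per_error: float) -> float:
    """Cost of the picking errors avoided by moving from baseline to assisted accuracy."""
    avoided_errors = picks * (assisted_accuracy - baseline_accuracy)
    return avoided_errors * cost_per_error


annual_picks = 1_000_000  # hypothetical volume, not a figure from the report

# Low end: best paper-based accuracy (98%) and cheapest error (USD 35).
low = avoided_error_cost(annual_picks, 0.98, 0.99, 35)
# High end: worst paper-based accuracy (96%) and costliest error (USD 125).
high = avoided_error_cost(annual_picks, 0.96, 0.99, 125)

print(f"Avoided error cost per {annual_picks:,} picks: USD {low:,.0f} to USD {high:,.0f}")
```

The spread between the low and high ends is about tenfold, so any real business case would depend heavily on the site's actual baseline accuracy and error cost.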

Market Segmentation

By Product Type

- AI Camera and Assistant Glasses (No Display)

- Full Augmented Reality Smart Glasses (Waveguide Display)

- Industrial and Enterprise AR Headsets

- Others (Healthcare AR, Sports and Fitness AR)

By Application

- Consumer Lifestyle and Social

- Industrial Manufacturing and Field Service

- Healthcare and Medical Imaging

- Logistics and Warehousing

- Defence and Public Safety

By Region

- North America

- Europe

- Asia Pacific

- Latin America

- Middle East and Africa

By Distribution Channel

- Consumer Retail and E-commerce (Direct and Partner)

- Enterprise Direct Sales and System Integrators

- Telecom Operator Bundling

- Healthcare and Industrial OEM Supply

Research Framework and Methodological Approach

Information Procurement → Information Analysis → Market Formulation & Validation

Overview of Our Research Process

MarketsNXT follows a structured, multi-stage research framework designed to ensure accuracy, reliability, and strategic relevance of every published study. Our methodology integrates globally accepted research standards with industry best practices in data collection, modeling, verification, and insight generation.

1. Data Acquisition Strategy

Robust data collection is the foundation of our analytical process. MarketsNXT employs a layered sourcing model.

Secondary Research

- Company annual reports & SEC filings

- Industry association publications

- Technical journals & white papers

- Government databases (World Bank, OECD)

- Paid commercial databases

Primary Research

- KOL Interviews (CEOs, Marketing Heads)

- Surveys with industry participants

- Distributor & supplier discussions

- End-user feedback loops

- Questionnaires for gap analysis

Analytical Modeling and Insight Development

After collection, datasets are processed and interpreted using multiple analytical techniques to identify baseline market values, demand patterns, growth drivers, constraints, and opportunity clusters.

2. Market Estimation Techniques

MarketsNXT applies multiple estimation pathways to strengthen forecast accuracy.

Bottom-up Approach

Aggregating granular demand data from country level to derive global figures.

Top-down Approach

Breaking down the parent industry market to identify the target serviceable market.

Supply Chain Anchored Forecasting

MarketsNXT integrates value chain intelligence into its forecasting structure to ensure commercial realism and operational alignment.

Supply-Side Evaluation

Revenue and capacity estimates are developed through company financial reviews, product portfolio mapping, benchmarking of competitive positioning, and commercialization tracking.

3. Market Engineering & Validation

Market engineering involves the triangulation of data from multiple sources to minimize errors.

- Extensive gathering of raw data

- Statistical regression & trend analysis

- Cross-verification with experts

- Publication of market study

Client-Centric Research Delivery

MarketsNXT positions research delivery as a collaborative engagement rather than a static information transfer. Analysts work with clients to clarify objectives, interpret findings, and connect insights to strategic decisions.