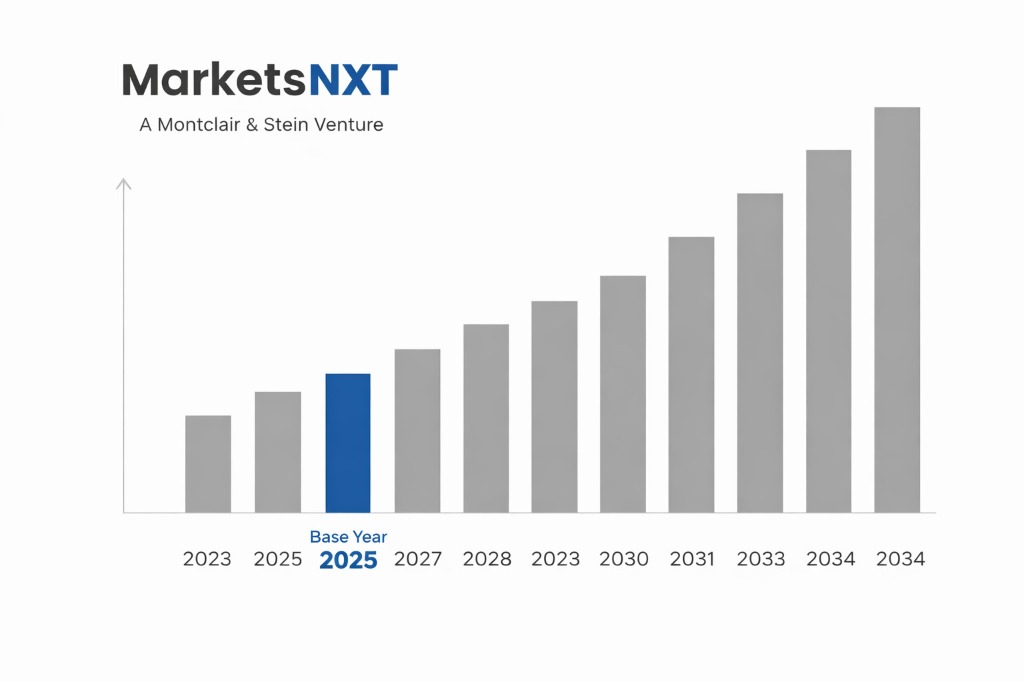

AI Semiconductor and Accelerator Chip Market Size, Share & Forecast 2026–2034

Report Highlights

- Market Size 2024: USD 43.7 billion
- Market Size 2034: USD 268.6 billion
- CAGR: 21.6%
- Market Definition: Specialised semiconductor chips designed for AI training and inference workloads, including graphics processing units, tensor processing units, neural processing units, and custom AI ASICs for data centre, edge, and embedded AI applications.
- Leading Companies: Nvidia, AMD, Google, Amazon, Microsoft
- Base Year: 2025
- Forecast Period: 2026–2034

Who Controls This Market — And Who Is Threatening That Control

Nvidia's control of the AI chip market is the most commercially dominant position any semiconductor company has held since Intel's x86 CPU monopoly in the 1990s. Its H100 and H200 GPUs, priced at USD 30,000–40,000 per unit, generated approximately USD 90 billion in data centre revenue for fiscal year 2025, and the Blackwell architecture (B100, B200, GB200) launched in 2024 extended its performance lead. The real moat, however, is not the hardware but the CUDA software ecosystem: 4 million developers, 800 optimised libraries, and nearly 20 years of accumulated software optimisation. Switching away from Nvidia GPUs means rewriting or recompiling the AI training and inference software that organisations have built on CUDA-specific kernels and memory management primitives. That transition takes 12–24 months for large research and enterprise teams and is not undertaken lightly, even when alternative hardware offers comparable performance.

The threats to Nvidia's position are real but operate on different timescales. Google's TPU v5e and v5p accelerators, deployed exclusively within Google Cloud, give GCP the most competitive AI training economics in the hyperscaler market: Google's internal AI research runs on TPUs, not Nvidia GPUs, and the cost differential is significant. Amazon's Trainium 2 chips power AWS's AI training instances at a claimed 30%–40% better price-performance than comparable GPU-based instances. Microsoft's Maia 100 chip, deployed in Azure for inference workloads, is the first indication that Microsoft is serious about reducing its Nvidia GPU dependency even as it spends billions on Nvidia hardware. Intel's Gaudi 3, while still behind Nvidia on absolute performance, is priced aggressively, is supported by Intel's developer ecosystem investment, and represents the most credible x86-ecosystem alternative for AI workloads.

Industry Snapshot

AI semiconductors are the fastest-growing segment of the global semiconductor industry, growing from approximately USD 19 billion in 2021 to USD 48.6 billion in 2024, a 156% increase in three years driven by the explosive growth of large language model training and inference at hyperscaler scale. The market encompasses three primary product categories:

- Training chips optimise AI model weights from large datasets. The work is compute-intensive and memory-bandwidth-intensive, and it requires the largest and most expensive chips.
- Inference chips run trained models to serve production applications. Buyers are more price-performance sensitive, and the workloads favour lower-precision arithmetic and higher throughput per watt.
- Edge AI chips are embedded in devices (smartphones, cameras, vehicles, IoT sensors) for on-device AI inference.

Training is dominated by Nvidia GPUs and hyperscaler custom silicon. Inference is more fragmented, with Nvidia, Qualcomm, AMD, and specialised inference processors competing across cloud, edge, and device deployment contexts.

The Forces Accelerating Demand Right Now

Large language model scale is the primary driver: each generation of frontier AI model requires an order of magnitude or more compute than its predecessor. GPT-4 is estimated to have required well over ten times the training compute of GPT-3, and GPT-5-class models are expected to require 10–50× GPT-4 levels. Training runs for next-generation models from Anthropic, OpenAI, Google, and Meta occupy clusters of 100,000+ H100-equivalent GPUs at a time, sustaining demand at price points where Nvidia GPU supply remains below demand. The agentic AI deployment wave is driving inference demand even faster than training, because agents running continuously in enterprise workflows consume GPU inference capacity 24/7 at volumes that the copilot generation did not require. The sovereign AI buildout is a third driver: government AI infrastructure programmes in UAE, Saudi Arabia, France, Japan, India, and 30+ other nations, each purchasing USD 1–5 billion of AI compute, form a procurement cycle that operates independently of enterprise AI demand and provides the demand visibility that semiconductor capital planning depends on.

What Is Holding This Market Back

Export controls are the most significant near-term constraint on market growth. US Department of Commerce restrictions on exports of Nvidia H100, H800, A100, and now Blackwell GPUs to China have eliminated China as an addressable market for Nvidia's highest-performance products, a market that represented approximately 20%–25% of Nvidia data centre revenue before controls were implemented. China's response, accelerated domestic AI chip development through Huawei Ascend, Cambricon, and Biren Technology, is both a competitive threat and a demand substitution that removes a portion of the global addressable market from Nvidia's reach. The power consumption of AI data centres is a second constraint: a single H100 GPU draws up to 700 W, so a 100,000-GPU training cluster requires about 70 MW for the GPUs alone, equivalent to a small city. The result is a set of grid capacity, cooling infrastructure, and energy cost constraints that are slowing AI data centre capacity expansion independent of chip availability.
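The cluster power figure above is simple multiplication, and a short sketch makes it easy to rerun with other inputs. The function name `cluster_power_mw` and the `pue` overhead multiplier are illustrative assumptions, not something the report defines; the 1.3 value in the second call is a hypothetical facility overhead, not a figure from this study.

```python
def cluster_power_mw(gpu_count: int, watts_per_gpu: float, pue: float = 1.0) -> float:
    """Return the electrical load of a GPU cluster in megawatts.

    pue is an assumed facility overhead multiplier (power usage
    effectiveness). 1.0 counts the GPUs alone, with no cooling,
    networking, or host-server overhead.
    """
    if gpu_count < 0 or watts_per_gpu < 0:
        raise ValueError("gpu_count and watts_per_gpu must be non-negative")
    if pue < 1.0:
        raise ValueError("pue cannot be below 1.0")
    return gpu_count * watts_per_gpu * pue / 1_000_000


# Figures from the text: 100,000 GPUs at 700 W each, GPUs only.
gpu_only_mw = cluster_power_mw(100_000, 700)

# Hypothetical overhead multiplier, for illustration only.
with_overhead_mw = cluster_power_mw(100_000, 700, pue=1.3)

print(f"GPUs only: {gpu_only_mw:.0f} MW")
print(f"With assumed overhead: {with_overhead_mw:.0f} MW")
```

Any facility overhead above 1.0 pushes the grid requirement beyond the GPU-only figure, which is why cooling and grid capacity appear as separate constraints.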

The Investment Case: Bull, Bear, and What Decides It

The bull case for AI semiconductor investment rests on the sustained capital expenditure commitments of the four major hyperscalers. Microsoft, Google, Amazon, and Meta collectively guided for USD 300+ billion in capital expenditure in 2025, the majority directed toward AI infrastructure (servers, networking, and cooling systems) in which AI chips are the primary value component. At a gross margin of roughly 70%–75% on data centre GPU revenue, in line with the company-wide gross margins Nvidia has reported in recent fiscal years, USD 100 billion in annual AI chip revenue implies USD 70–75 billion in annual gross profit, a profit pool that underpins the USD 3–4 trillion market capitalisation Nvidia reached in 2024–2025.

The bear case argues that hyperscaler custom silicon investment will compress Nvidia's addressable market from the top of the demand curve — the largest buyers are building their own chips — while AMD's MI300X and next-generation MI400 GPUs compete for the mid-market, and Chinese domestic alternatives address the China segment. If hyperscaler custom silicon captures 30%–40% of total AI compute spending by 2028, Nvidia's addressable market shrinks even as the total AI chip market grows, potentially capping its revenue trajectory at USD 150–180 billion by 2030 rather than the USD 250+ billion that linear extrapolation of current growth implies.
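The bear-case mechanism, a shrinking addressable market inside a growing total market, can be sketched in a few lines. The function name `addressable_market` and the index values below (100 today, 130 later, a 10% starting custom-silicon share) are hypothetical placeholders chosen for illustration; only the 30%–40% custom-silicon share range comes from the text.

```python
def addressable_market(total_spend: float, custom_share: float) -> float:
    """Return the spend left for merchant GPU vendors.

    total_spend:  total AI compute spending (any consistent unit).
    custom_share: fraction captured by hyperscaler custom silicon (0 to 1).
    """
    if not 0.0 <= custom_share <= 1.0:
        raise ValueError("custom_share must be between 0 and 1")
    return total_spend * (1.0 - custom_share)


# Hypothetical index values: total spend grows 30%, from 100 to 130.
today = addressable_market(total_spend=100.0, custom_share=0.10)
later = addressable_market(total_spend=130.0, custom_share=0.40)

print(f"Addressable today: {today:.1f}")
print(f"Addressable later: {later:.1f}")
print("Shrinks despite growth:", later < today)
```

With these placeholder inputs, the merchant-addressable portion falls even though total spending rises, which is the dynamic the bear case depends on. Whether it materialises depends on how fast the custom-silicon share actually grows relative to the total market.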

Where the Next USD Billion Is Being Built

AI inference at the edge, which embeds AI processing capability directly in smartphones, vehicles, cameras, and industrial sensors, is the next high-growth AI chip category. Qualcomm's Snapdragon 8 Elite for smartphones, Apple's Neural Engine in iPhone chips, and Nvidia's Orin/Thor platform for automotive AI each represent multi-billion-dollar markets independent of data centre AI chip demand. The networking and interconnect market for AI data centres (high-bandwidth memory, InfiniBand networking, silicon photonics optical interconnects) is a USD 20+ billion market that is growing in proportion to AI chip deployments and offers differentiated exposure to AI infrastructure growth beyond GPU hardware. Memory chip manufacturers (SK Hynix, Samsung, Micron) producing high-bandwidth memory (HBM), which is packaged alongside the AI processor, are capturing value from the memory bandwidth intensity of AI workloads, with HBM revenue growing 200%+ since 2022 and representing 20%–30% of total AI chip system cost.

Market at a Glance

| Parameter | Details |
|---|---|
| Market Size 2024 | USD 43.7 billion |
| Market Size 2034 | USD 268.6 billion |
| Growth Rate | 21.6% CAGR (2026–2034) |
| Most Critical Decision Factor | Technology maturity and regulatory readiness |
| Largest Region | North America |
| Competitive Structure | Fragmented: multiple platform and specialist players |
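For readers who want to relate the size and growth figures in the table, the standard compound annual growth rate formula is sketched below. The helper names `cagr` and `implied_start_value` are illustrative, and the choice of year spans is an assumption, since the report does not state which start value and period its CAGR was computed from.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def implied_start_value(end_value: float, rate: float, years: int) -> float:
    """Start value that grows to end_value at the given annual rate."""
    if years <= 0 or rate <= -1:
        raise ValueError("years must be positive and rate above -100%")
    return end_value / (1 + rate) ** years


# Assumption: treat the 2024 and 2034 table values as endpoints 10 years apart.
rate_2024_2034 = cagr(43.7, 268.6, years=10)

# Assumption: apply the stated 21.6% rate backwards over 9 years (2025 to 2034).
base_2025 = implied_start_value(268.6, 0.216, years=9)

print(f"Implied CAGR 2024-2034: {rate_2024_2034:.1%}")
print(f"Implied 2025 base at 21.6%: USD {base_2025:.1f} billion")
```

Running both directions is a quick way to see how sensitive a headline growth rate is to the chosen base year and period length.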

Regional Intelligence

North America dominates AI chip design: Nvidia, AMD, Google, Amazon, Microsoft, Intel, and Qualcomm are all headquartered in the US, and the majority of AI chip intellectual property is developed in California and the broader US technology ecosystem. Manufacturing is dominated by Taiwan (TSMC, which manufactures Nvidia Blackwell, AMD MI300X, and Apple Neural Engine silicon on N3/N5-class processes) and South Korea (Samsung, which manufactures some AMD and Qualcomm AI chips). The US-China AI chip export control regime has bifurcated the market: Western AI chip development is accelerating under CHIPS and Science Act support, while Chinese domestic chip development (Huawei Ascend 910B, Biren BR100) is progressing rapidly but remains 1–2 generations behind leading Western chips on compute performance per watt. Europe has minimal AI chip development beyond Arm Holdings (UK) and a small cluster of AI chip startups (such as Graphcore, now owned by SoftBank), and remains primarily a consumer of US and Asian AI chip supply.

Leading Market Participants

- Nvidia
- AMD
- Qualcomm
- Cerebras Systems

Long-Term Market Perspective

The AI chip market will consolidate around four architectural paradigms by 2030: large GPU clusters for frontier model training (dominated by Nvidia and AMD), custom hyperscaler ASICs for internal AI workloads (Google, Amazon, Microsoft), edge AI neural processors embedded in consumer and industrial devices (Qualcomm, Apple, emerging specialised players), and inference-optimised chips for enterprise AI serving (a more competitive, fragmented segment). Nvidia's market position will be determined by the pace of CUDA ecosystem migration — the longer it takes for MLIR and hardware-abstracted AI frameworks to reach production quality on non-Nvidia hardware, the longer Nvidia's software moat sustains hardware premium pricing. By 2034, AI chips will represent approximately 20%–25% of the total global semiconductor market — a structural shift that permanently elevates the economic importance of GPU and AI ASIC manufacturers relative to the CPU and memory companies that dominated semiconductor economics for the previous 30 years.

Research Framework and Methodological Approach

- Information Procurement
- Information Analysis
- Market Formulation & Validation

Overview of Our Research Process

MarketsNXT follows a structured, multi-stage research framework designed to ensure accuracy, reliability, and strategic relevance of every published study. Our methodology integrates globally accepted research standards with industry best practices in data collection, modeling, verification, and insight generation.

1. Data Acquisition Strategy

Robust data collection is the foundation of our analytical process. MarketsNXT employs a layered sourcing model.

Secondary sources:

- Company annual reports & SEC filings
- Industry association publications
- Technical journals & white papers
- Government databases (World Bank, OECD)
- Paid commercial databases

Primary sources:

- KOL interviews (CEOs, marketing heads)
- Surveys with industry participants
- Distributor & supplier discussions
- End-user feedback loops
- Questionnaires for gap analysis

Analytical Modeling and Insight Development

After collection, datasets are processed and interpreted using multiple analytical techniques to identify baseline market values, demand patterns, growth drivers, constraints, and opportunity clusters.

2. Market Estimation Techniques

MarketsNXT applies multiple estimation pathways to strengthen forecast accuracy.

Bottom-up Approach

Aggregating granular demand data from country level to derive global figures.

Top-down Approach

Breaking down the parent industry market to identify the target serviceable market.
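The two estimation pathways above can be illustrated with a minimal sketch. All inputs below (country names, demand figures, parent market size, and share) are hypothetical placeholders, and the function names are illustrative; this is not MarketsNXT's actual model, only a sketch of the general bottom-up and top-down techniques and a simple way to compare them.

```python
from typing import Mapping


def bottom_up(country_demand: Mapping[str, float]) -> float:
    """Aggregate country-level demand into a global figure."""
    return sum(country_demand.values())


def top_down(parent_market: float, target_share: float) -> float:
    """Carve the target market out of a parent industry market."""
    if not 0.0 <= target_share <= 1.0:
        raise ValueError("target_share must be between 0 and 1")
    return parent_market * target_share


def relative_gap(a: float, b: float) -> float:
    """Absolute difference between two estimates, relative to their mean."""
    return abs(a - b) / ((a + b) / 2)


# Hypothetical inputs, in USD billion, for illustration only.
demand = {"Country A": 20.0, "Country B": 15.0, "Country C": 10.0}
estimate_bu = bottom_up(demand)                                  # 45.0
estimate_td = top_down(parent_market=500.0, target_share=0.10)   # 50.0

gap = relative_gap(estimate_bu, estimate_td)
print(f"Bottom-up: {estimate_bu:.1f}, top-down: {estimate_td:.1f}, gap: {gap:.1%}")
```

A large gap between the two estimates signals that one of the inputs, typically the assumed share or a missing country, needs to be revisited before a figure is published.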

Supply Chain Anchored Forecasting

MarketsNXT integrates value chain intelligence into its forecasting structure to ensure commercial realism and operational alignment.

Supply-Side Evaluation

Revenue and capacity estimates are developed through company financial reviews, product portfolio mapping, benchmarking of competitive positioning, and commercialization tracking.

3. Market Engineering & Validation

Market engineering involves the triangulation of data from multiple sources to minimize errors.

- Extensive gathering of raw data.
- Statistical regression & trend analysis.
- Cross-verification with experts.
- Publication of market study.

Client-Centric Research Delivery

MarketsNXT positions research delivery as a collaborative engagement rather than a static information transfer. Analysts work with clients to clarify objectives, interpret findings, and connect insights to strategic decisions.