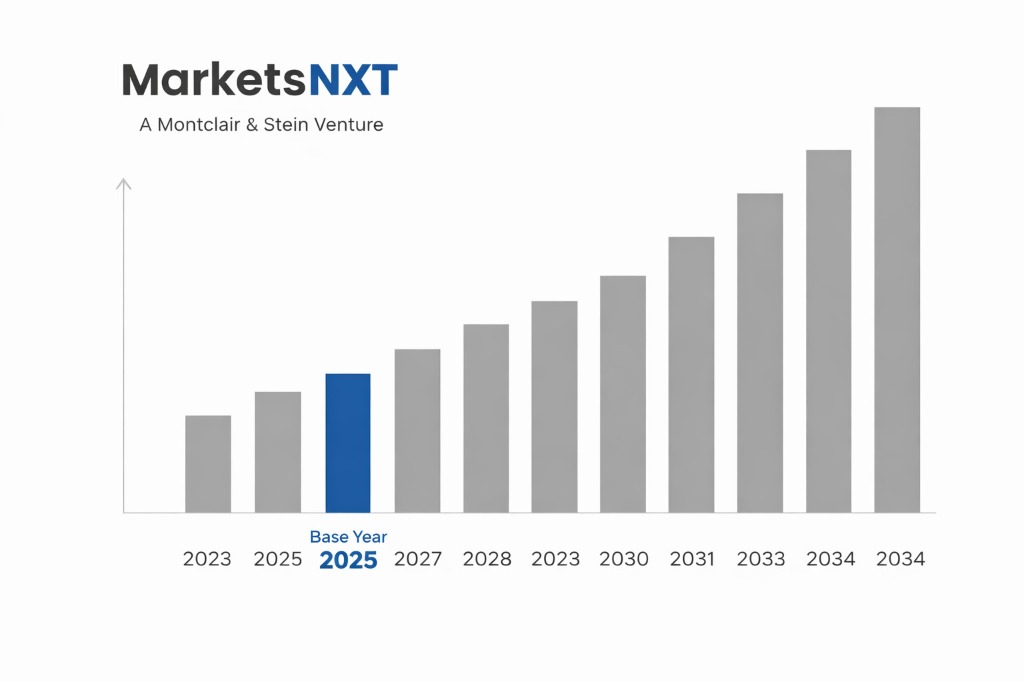

Quantum Computing Hardware Market Size, Share & Forecast 2026–2034

Report Highlights

- Market Size 2024: USD 1.4 billion

- Market Size 2034: USD 25.6 billion

- CAGR: 35.8%

- Market Definition: Quantum processing units, cryogenic control systems, quantum cloud access services, and error correction infrastructure based on superconducting qubits, trapped ions, neutral atoms, and photonic architectures for scientific research, optimisation, drug discovery, cryptography, and financial modelling applications.

- Leading Companies: IBM, Google Quantum AI, IonQ, Quantinuum, PsiQuantum

- Base Year: 2025

- Forecast Period: 2026–2034
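The headline figures can be sanity-checked with the standard compound annual growth rate formula. The sketch below is illustrative only: the function names are hypothetical, and it assumes simple annual compounding between the quoted endpoints, since the report does not state a 2025 base-year value.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


def implied_start(end_value: float, rate: float, years: int) -> float:
    """Starting value that grows to `end_value` at `rate` over `years`."""
    return end_value / (1 + rate) ** years


# Endpoints quoted in the highlights (USD billion).
size_2024 = 1.4
size_2034 = 25.6

# Growth rate implied by the 2024 and 2034 figures (10 years apart).
print(f"Implied CAGR 2024-2034: {cagr(size_2024, size_2034, 10):.1%}")

# 2025 base-year value implied by the quoted 35.8% CAGR (9 years to 2034).
# This is a derived, assumed figure, not one stated in the report.
print(f"Implied 2025 base: USD {implied_start(size_2034, 0.358, 9):.2f} billion")
```

Under these assumptions the 2024 and 2034 endpoints alone imply a rate slightly below the quoted 35.8%, which would be consistent with the quoted rate being measured from a 2025 base-year value rather than from the 2024 figure.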

Who Controls This Market — And Who Is Threatening That Control

IBM runs the most consistent quantum computing development programme among technology incumbents. Its roadmap, from the 433-qubit Osprey (2022) through the 1,121-qubit Condor (2023) to the modular multi-processor Heron architecture, is the most transparent public progress trajectory in the industry. IBM's Quantum Network (over 230 member organisations, including Fortune 500 companies, academic research institutions, and national laboratories, that access IBM quantum systems through the cloud) creates an ecosystem of developers, researchers, and potential enterprise buyers that no pure-play quantum company has matched in breadth. Google Quantum AI's 2023 demonstration of below-threshold quantum error correction on its Sycamore processor showed for the first time that adding more physical qubits reduced rather than increased logical qubit error rates. It is the most significant technical milestone in the quantum computing field and positions Google as the leader in superconducting qubit error correction research.

IonQ and Quantinuum (formed by the merger of Honeywell Quantum Solutions and Cambridge Quantum) represent the trapped-ion architecture camp, which uses individual charged atoms as qubits rather than superconducting circuits. Trapped-ion qubits have intrinsically higher fidelity than superconducting qubits at current system sizes (Quantinuum's H2 processor achieved 99.9% two-qubit gate fidelity, the highest of any commercial quantum processor) but are more difficult to scale to the qubit counts needed for large-scale computation. PsiQuantum is pursuing the photonic qubit approach, using single photons rather than matter-based qubits, and makes the ambitious claim that silicon photonic chip manufacturing enables fault-tolerant quantum processors to be built on semiconductor fabrication lines. The approach has not yet demonstrated a working programmable quantum circuit, but it is backed by USD 900 million in investment and partnerships with GlobalFoundries and SkyWater Technology.

Industry Snapshot

Quantum computers exploit quantum mechanical properties — superposition (a qubit can represent 0 and 1 simultaneously) and entanglement (qubits can be correlated in ways impossible for classical bits) — to perform certain computations exponentially faster than classical computers. Current quantum hardware (Noisy Intermediate-Scale Quantum, or NISQ, devices) has 100–2,000 physical qubits with error rates of 0.1%–1% per gate operation that limit circuit depth and the complexity of computations that can be performed reliably. Fault-tolerant quantum computing — where logical qubit error rates are suppressed below 10⁻¹⁵ through quantum error correction codes — requires approximately 1,000 physical qubits per logical qubit at current error rates, implying 1 million physical qubits for 1,000 logical qubits sufficient for problems like breaking RSA-2048 encryption or simulating molecular quantum chemistry at pharmaceutical relevance. No current development roadmap credibly reaches 1 million physical qubits with fault-tolerant error correction within the 2034 forecast horizon.
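The physical-to-logical qubit overhead quoted above can be reproduced to order of magnitude with a commonly used surface-code heuristic. The sketch below is a rough illustration, not the report's own model: the scaling law, the 1% threshold, the 0.1 prefactor, and the rotated surface-code qubit count are all assumptions introduced here, and the function names are hypothetical.

```python
def logical_error_rate(p: float, distance: int,
                       p_threshold: float = 1e-2, prefactor: float = 0.1) -> float:
    """Heuristic surface-code logical error rate (assumed scaling model)."""
    return prefactor * (p / p_threshold) ** ((distance + 1) / 2)


def min_distance(p: float, target: float) -> int:
    """Smallest odd code distance whose heuristic error rate is at or below target.

    Only terminates when p is below the assumed threshold.
    """
    distance = 3
    while logical_error_rate(p, distance) > target:
        distance += 2
    return distance


def physical_per_logical(distance: int) -> int:
    """Data plus measurement qubits in one rotated surface-code patch."""
    return 2 * distance ** 2 - 1


p_gate = 1e-3    # 0.1% physical error rate, the low end of the quoted range
target = 1e-15   # logical error target quoted in the text
d = min_distance(p_gate, target)
per_logical = physical_per_logical(d)
print(f"distance {d}: {per_logical:,} physical qubits per logical qubit")
print(f"1,000 logical qubits: {1_000 * per_logical:,} physical qubits")
```

With these assumed constants the required code distance lands in the high twenties, giving a per-logical-qubit overhead of the same order of magnitude as the approximately 1,000 quoted above. At a 1% physical error rate (the assumed threshold) the model gives no error suppression at all, which is why gate fidelity matters as much as qubit count.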

The Forces Accelerating Demand Right Now

Cryptographic threat timelines are driving government quantum computing investment independent of near-term commercial utility. The US NSA's Commercial National Security Algorithm Suite 2.0 (CNSA 2.0) mandate, which requires federal agencies to transition to post-quantum cryptographic standards by 2030–2035, reflects intelligence assessments that a cryptographically relevant quantum computer (one capable of breaking RSA-2048 in hours) could emerge within 10–20 years rather than 30–50 years. This assessment is driving USD 3+ billion in annual US government quantum investment (NSF, DARPA, DOE, NSA, DoD) that funds hardware, software, and security research independently of commercial returns. Pharmaceutical and materials simulation is the most credible near-term commercial application. Quantum simulation of molecular electronic structure for drug discovery and catalyst design is a problem where even NISQ-era quantum advantage over classical simulation methods has been demonstrated for small molecules, providing a research value proposition that justifies pharmaceutical company investment in quantum computing programmes at Johnson & Johnson, Roche, and Pfizer.

What Is Holding This Market Back

Hardware maturity is the dominant constraint. Current quantum processors require cooling to 15 millikelvin (near absolute zero) using dilution refrigerators costing USD 500,000–2 million each — a cryogenic overhead that limits scalability and creates infrastructure requirements incompatible with most enterprise deployment environments outside specialised data centres. Qubit coherence times (the duration a qubit maintains its quantum state before environmental noise corrupts it) and gate fidelities must improve by 10–100× simultaneously with qubit count to achieve fault-tolerant computation on problems of commercial relevance. The quantum software ecosystem is immature — most quantum algorithms with theoretical advantage over classical methods require fault-tolerant hardware not yet available, limiting practical quantum programming to narrow optimisation and simulation use cases that are valuable but not the transformative applications that justify market projections. Talent scarcity — quantum computing requires physicists, engineers, and software developers with skills that are produced in small numbers by a limited set of academic programmes — creates a human capital constraint that is slowing hardware and software development simultaneously.
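To see why gate fidelity limits what current hardware can run, a rough model treats every gate as failing independently with probability p, so a circuit of G gates succeeds with probability (1 - p)^G. The sketch below is a simplification under that assumption (it ignores idle decoherence, measurement error, and error mitigation); the function name and the 50% success cutoff are illustrative choices, not figures from the report.

```python
import math


def max_gates(error_per_gate: float, min_success: float = 0.5) -> int:
    """Largest gate count G such that (1 - p)**G stays at or above min_success."""
    return math.floor(math.log(min_success) / math.log(1.0 - error_per_gate))


# Sweep from today's quoted 1% and 0.1% error rates down to 100x better.
for p in (1e-2, 1e-3, 1e-4, 1e-5):
    print(f"error per gate {p:.0e}: about {max_gates(p):,} gates")
```

In this model the gate budget scales roughly as 1/p, so each 10× improvement in fidelity buys about 10× more gates before the circuit is more likely to fail than succeed.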

The Investment Case: Bull, Bear, and What Decides It

The bull case acknowledges that most near-term market projections are overstated, but argues that the long-term commercial applications — cryptography, molecular simulation, financial optimisation, logistics — represent addressable markets worth USD 100+ billion annually at full quantum advantage deployment. The investment case is a venture position on a transformative technology with a 10–20-year development timeline, where current valuations of USD 500 million–USD 3 billion for leading quantum computing companies are reasonable for the option value on achieving fault-tolerant quantum advantage. The quantum cloud services market — providing access to NISQ quantum processors for research, algorithm development, and hybrid classical-quantum applications — is already a USD 1+ billion annual market growing at 30%+ annually, providing near-term revenue visibility for IBM, IonQ, and Quantinuum independent of fault-tolerant milestone achievement.

The bear case argues that quantum computing is structurally similar to nuclear fusion power: a technology with overwhelming theoretical appeal that has consistently failed to achieve commercial viability on projected timelines because the fundamental engineering challenges are harder than initial optimism assumed. If fault-tolerant quantum computing is not achieved until 2040 or later, the market valuations assigned to current quantum hardware companies are 15 years ahead of commercial reality, implying significant value destruction for current investors. The decisive variable is whether any company demonstrates a fault-tolerant logical qubit with an error rate below 10⁻⁶ by 2028, the milestone that would confirm the error correction threshold is achievable and reset market expectations toward a credible commercialisation timeline.

Where the Next USD Billion Is Being Built

Post-quantum cryptography (PQC), meaning classical cryptographic algorithms resistant to quantum computer attacks, is a USD 3–5 billion near-term cybersecurity market driven by NIST's finalisation of its first PQC standards in 2024 (CRYSTALS-Kyber, CRYSTALS-Dilithium, and SPHINCS+, standardised as ML-KEM, ML-DSA, and SLH-DSA) and the NSA CNSA 2.0 migration mandate. Every organisation transmitting sensitive data over encrypted channels must eventually transition to PQC, creating a software and hardware upgrade market that benefits quantum-adjacent cybersecurity companies including PQShield, SandboxAQ, and IBM Security regardless of quantum hardware progress. Quantum networking, which covers quantum key distribution and quantum repeater networks for theoretically unhackable communication, is a USD 1–2 billion near-term market driven by government and financial services demand for the highest-security communication infrastructure available.

Market at a Glance

| Parameter | Details |

|---|---|

| Market Size 2024 | USD 1.4 billion |

| Market Size 2034 | USD 25.6 billion |

| Growth Rate | 35.8% CAGR (2026–2034) |

| Most Critical Decision Factor | Technology maturity and regulatory readiness |

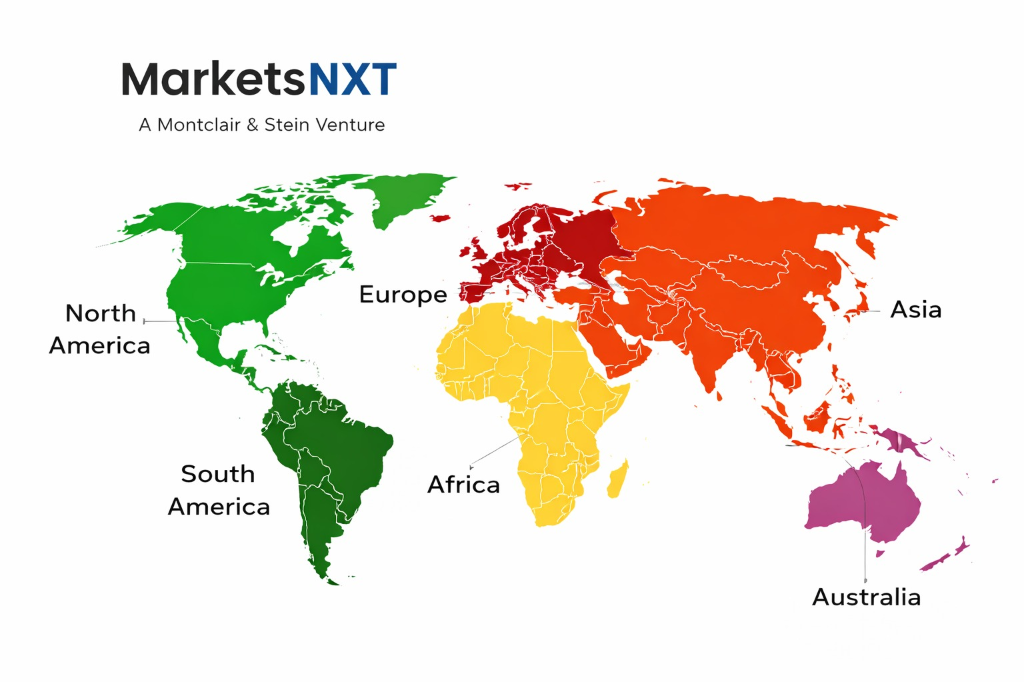

| Largest Region | North America |

| Competitive Structure | Fragmented — multiple platform and specialist players |

Regional Intelligence

North America leads in quantum computing investment, talent, and commercial deployment, with IBM, Google, Microsoft, IonQ, Rigetti, and Atom Computing all headquartered in the US and collectively receiving the majority of global quantum hardware R&D investment. The US National Quantum Initiative Act and its successor programmes have mobilised USD 3+ billion in federal funding across NIST, NSF, DOE, and DARPA. Europe has significant quantum investment through the Quantum Flagship Programme (EUR 1 billion, 2018–2027), with strong national quantum programmes in Germany (DLR Quantum Computing Initiative), the Netherlands (QuTech at TU Delft), France (Plan Quantique), and the UK (National Quantum Computing Centre). China is investing heavily in quantum computing through national strategic technology programmes, with Baidu, Alibaba (through its DAMO Academy quantum lab), and Origin Quantum developing competing hardware platforms; export controls are limiting Chinese access to US-origin quantum computing components and technology. Japan and Australia are both significant investors in quantum research: Australia through PsiQuantum's Australian quantum chip manufacturing partnership, and Japan through the government-backed Fujitsu and NTT quantum programmes.

Leading Market Participants

- IBM

- Google Quantum AI

- IonQ

- Quantinuum

Long-Term Market Perspective

By 2034, the quantum computing market will have demonstrated fault-tolerant logical qubit operation at small scale (100–500 logical qubits) in leading superconducting and trapped-ion systems, establishing a credible pathway to commercially relevant quantum advantage in the 2035–2040 timeframe for specific problem categories. The quantum cloud services market will have grown to USD 15–25 billion as NISQ and early fault-tolerant systems find value in hybrid classical-quantum algorithm development, pharmaceutical simulation, and quantum machine learning research. The companies that survive to the fault-tolerant era will be those that have built the software developer ecosystems, application expertise, and customer relationships that translate hardware capability into commercial solutions — a distinction that favours IBM and Google's broad platform investments over pure hardware vendors without integrated software stacks.

Research Framework and Methodological Approach

Research process stages: Information Procurement, Information Analysis, Market Formulation & Validation

Overview of Our Research Process

MarketsNXT follows a structured, multi-stage research framework designed to ensure accuracy, reliability, and strategic relevance of every published study. Our methodology integrates globally accepted research standards with industry best practices in data collection, modeling, verification, and insight generation.

1. Data Acquisition Strategy

Robust data collection is the foundation of our analytical process. MarketsNXT employs a layered sourcing model.

- Company annual reports & SEC filings

- Industry association publications

- Technical journals & white papers

- Government databases (World Bank, OECD)

- Paid commercial databases

- KOL Interviews (CEOs, Marketing Heads)

- Surveys with industry participants

- Distributor & supplier discussions

- End-user feedback loops

- Questionnaires for gap analysis

Analytical Modeling and Insight Development

After collection, datasets are processed and interpreted using multiple analytical techniques to identify baseline market values, demand patterns, growth drivers, constraints, and opportunity clusters.

2. Market Estimation Techniques

MarketsNXT applies multiple estimation pathways to strengthen forecast accuracy.

Bottom-up Approach

Aggregating granular demand data from country level to derive global figures.

Top-down Approach

Breaking down the parent industry market to identify the target serviceable market.
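The two estimation paths can be compared mechanically. The sketch below is a minimal illustration, not MarketsNXT's actual model: every number is a made-up placeholder, and the country names, parent-market size, and share are hypothetical inputs chosen only to show the arithmetic.

```python
# All figures are hypothetical placeholders (USD billion), not report data.
country_demand = {"Country A": 0.60, "Country B": 0.35, "Country C": 0.25}

parent_market = 10.0   # hypothetical parent-industry size
target_share = 0.13    # hypothetical share attributable to the target market


def bottom_up(demand_by_country: dict[str, float]) -> float:
    """Sum country-level demand to a global figure."""
    return sum(demand_by_country.values())


def top_down(parent_size: float, share: float) -> float:
    """Scale the parent market down to the target market."""
    return parent_size * share


def relative_gap(a: float, b: float) -> float:
    """Gap between two estimates relative to their midpoint."""
    return abs(a - b) / ((a + b) / 2)


bu = bottom_up(country_demand)
td = top_down(parent_market, target_share)
print(f"bottom-up {bu:.2f}, top-down {td:.2f}, gap {relative_gap(bu, td):.1%}")
```

A large gap between the two figures would signal that one set of inputs needs revisiting before the estimates are reconciled.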

Supply Chain Anchored Forecasting

MarketsNXT integrates value chain intelligence into its forecasting structure to ensure commercial realism and operational alignment.

Supply-Side Evaluation

Revenue and capacity estimates are developed through company financial reviews, product portfolio mapping, benchmarking of competitive positioning, and commercialization tracking.

3. Market Engineering & Validation

Market engineering involves the triangulation of data from multiple sources to minimize errors.

- Extensive gathering of raw data.

- Statistical regression & trend analysis.

- Cross-verification with experts.

- Publication of market study.

Client-Centric Research Delivery

MarketsNXT positions research delivery as a collaborative engagement rather than a static information transfer. Analysts work with clients to clarify objectives, interpret findings, and connect insights to strategic decisions.